G5BAIM Artificial Intelligence Methods Graham Kendall Neural Networks



G5BAIM Neural Networks

References: AIMA (Russell & Norvig, Artificial Intelligence: A Modern Approach), Chapter 19; Fausett, L. (1994), Fundamentals of Neural Networks: Architectures, Algorithms and Applications; Morton, I.M. (1995), An Introduction to Neural Networks (2nd ed.).

McCulloch & Pitts (1943) are generally recognised as the designers of the first neural network. Many of their ideas are still used today, e.g. the idea that many simple units combine to give increased computational power, and the idea of a threshold.

Hebb (1949) developed the first learning rule, on the premise that if two neurons are active at the same time, the strength of the connection between them should be increased.
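Hebb's premise is often written as the weight update Δw = η · x · y, where x and y are the activities of the two connected neurons. The sketch below assumes that textbook form; the learning rate `eta = 1.0`, the helper name `hebb_update` and the activity pairs are illustrative choices, not taken from the slides.

```python
def hebb_update(w: float, x: int, y: int, eta: float = 1.0) -> float:
    """Strengthen the connection when input x and output y are active together."""
    # Assumed textbook form of Hebb's rule: delta_w = eta * x * y
    return w + eta * x * y


w = 0.0
# Each pair is (pre-synaptic activity x, post-synaptic activity y).
for x, y in [(1, 1), (1, 0), (0, 1), (1, 1)]:
    w = hebb_update(w, x, y)
print(w)  # 2.0: only the two co-active presentations increased the weight
```

Only presentations where both neurons are active change the weight, which is exactly the "active at the same time" condition.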

During the 1950s and 1960s many researchers worked on the perceptron amid great excitement. 1969 saw the death of neural network research for about 15 years, following Minsky & Papert's analysis of the perceptron's limitations. Only in the mid-1980s (Parker and LeCun) was interest revived, although Werbos had in fact discovered the algorithm (backpropagation) in 1974.


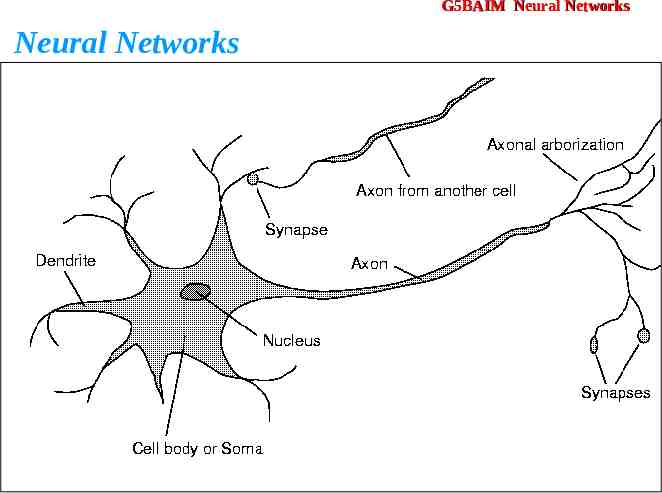

We are born with about 100 billion neurons. A neuron may connect to as many as 100,000 other neurons.

Signals are transmitted electrochemically. The synapses release a chemical transmitter; the sum of these transmitters can cause a threshold to be reached, causing the neuron to "fire". Synapses can be inhibitory or excitatory.

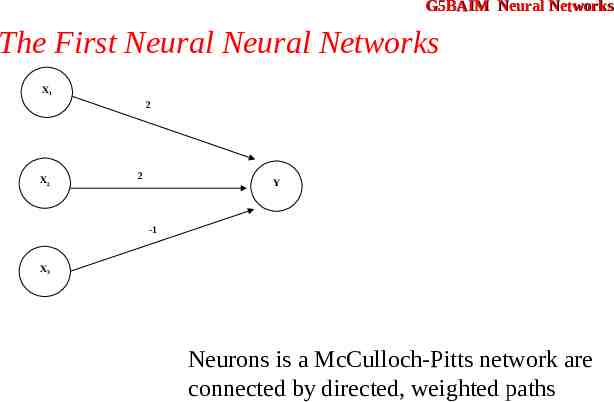

The First Neural Networks

McCulloch and Pitts produced the first neural network in 1943. Many of its principles can still be seen in the neural networks of today.

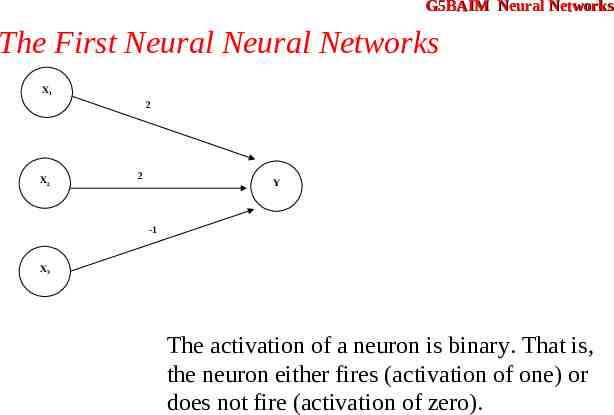

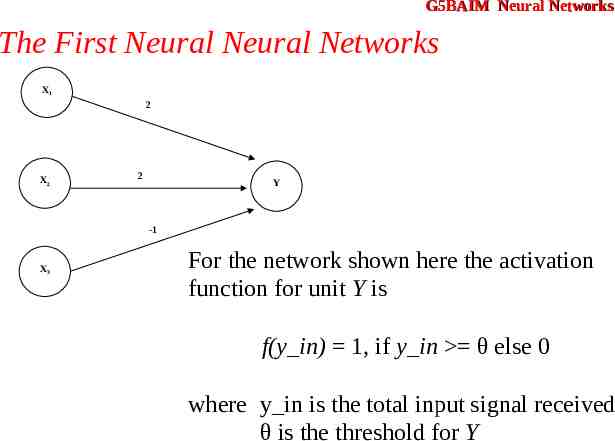

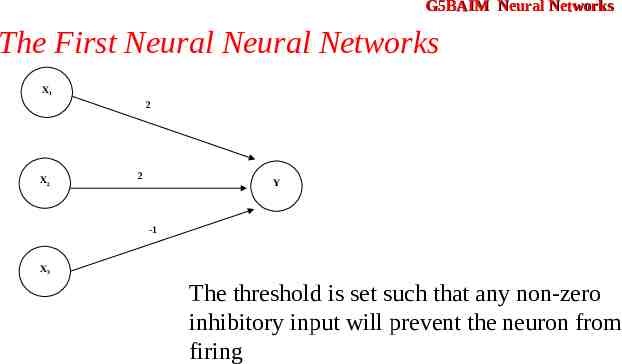

(Figure: a McCulloch-Pitts neuron Y with inputs X1 and X2, each on a connection of weight 2, and X3 on a connection of weight −1.)

The activation of a McCulloch-Pitts neuron is binary: the neuron either fires (activation of one) or does not fire (activation of zero).

For the network shown here, the activation function for unit Y is

$$f(y_{in}) = \begin{cases} 1 & \text{if } y_{in} \ge \theta \\ 0 & \text{otherwise} \end{cases}$$

where $y_{in}$ is the total input signal received and $\theta$ is the threshold for Y.

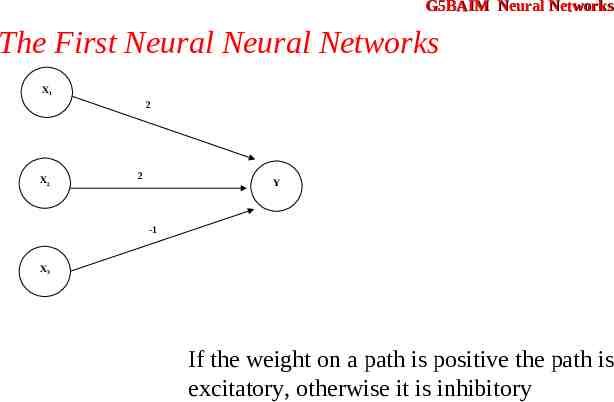

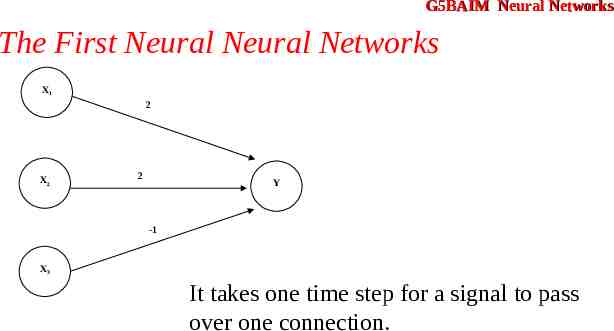

Neurons in a McCulloch-Pitts network are connected by directed, weighted paths.

If the weight on a path is positive, the path is excitatory; otherwise it is inhibitory.

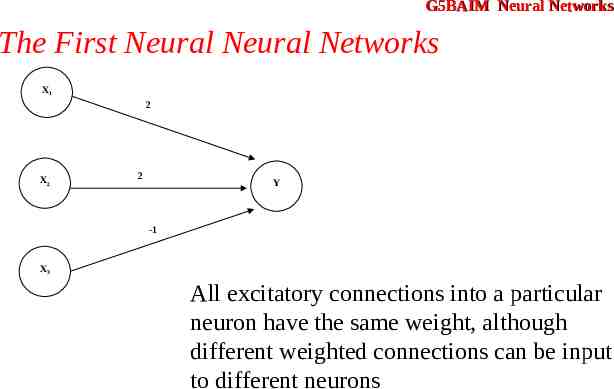

All excitatory connections into a particular neuron have the same weight, although excitatory connections into different neurons may have different weights.

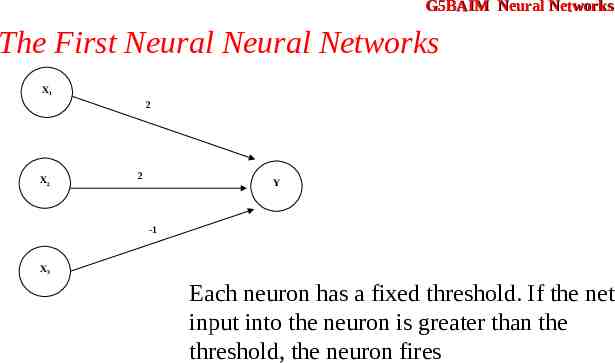

Each neuron has a fixed threshold. If the net input into the neuron is greater than or equal to the threshold, the neuron fires.

The threshold is set so that inhibition is absolute: any non-zero inhibitory input will prevent the neuron from firing.
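The slides do not give Y's threshold for the example neuron (weights 2, 2 and −1). A minimal sketch, assuming θ = 4: when X3 is on, the net input is at most 2 + 2 − 1 = 3, so θ must exceed 3 for inhibition to be absolute and must be at most 4 for Y to fire at all, which makes 4 the only integer choice. The helper names `f` and `y_output` are illustrative.

```python
from itertools import product

WEIGHTS = (2, 2, -1)  # X1, X2 excitatory (weight 2); X3 inhibitory (weight -1)
THETA = 4             # assumed threshold for Y (not given on the slides)


def f(y_in: int, theta: int = THETA) -> int:
    """McCulloch-Pitts activation: fire (1) iff the net input reaches the threshold."""
    return 1 if y_in >= theta else 0


def y_output(x1: int, x2: int, x3: int) -> int:
    """Net input y_in is the weighted sum of the binary input signals."""
    y_in = sum(w * x for w, x in zip(WEIGHTS, (x1, x2, x3)))
    return f(y_in)


for x1, x2, x3 in product((0, 1), repeat=3):
    print(x1, x2, x3, "->", y_output(x1, x2, x3))
```

Y fires only for X1 = X2 = 1 with X3 = 0; switching X3 on blocks firing whatever the other inputs are.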

It takes one time step for a signal to pass over one connection.

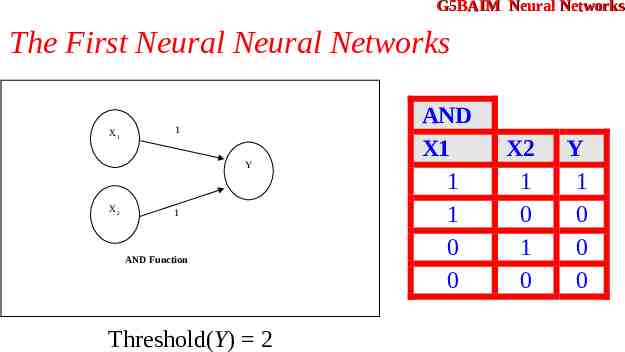

AND function (figure: X1 and X2 each connect to Y with weight 1; Threshold(Y) = 2):

| X1 | X2 | Y |
|---|---|---|
| 1 | 1 | 1 |
| 1 | 0 | 0 |
| 0 | 1 | 0 |
| 0 | 0 | 0 |

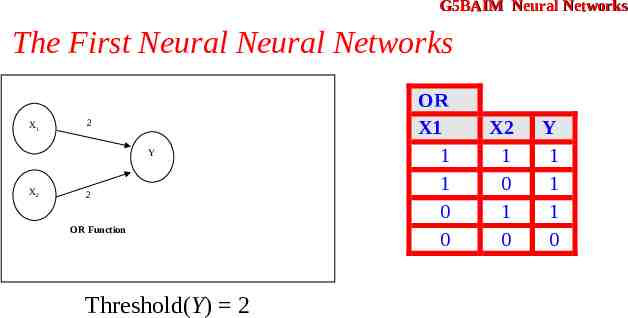

OR function (figure: X1 and X2 each connect to Y with weight 2; Threshold(Y) = 2):

| X1 | X2 | Y |
|---|---|---|
| 1 | 1 | 1 |
| 1 | 0 | 1 |
| 0 | 1 | 1 |
| 0 | 0 | 0 |

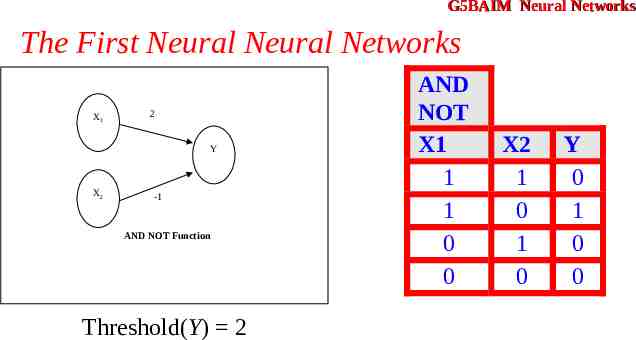

AND NOT function (figure: X1 connects to Y with weight 2 and X2 with weight −1; Threshold(Y) = 2):

| X1 | X2 | Y |
|---|---|---|
| 1 | 1 | 0 |
| 1 | 0 | 1 |
| 0 | 1 | 0 |
| 0 | 0 | 0 |

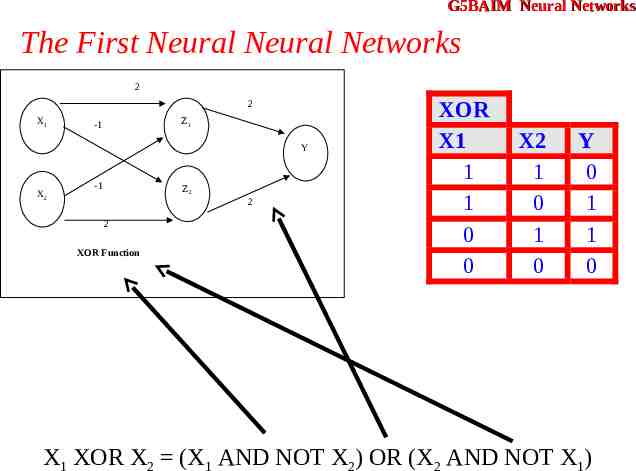
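The three gates differ only in their weights and threshold. A minimal sketch that evaluates a McCulloch-Pitts unit (fire iff the weighted sum is at least θ) with the weights and thresholds given on the AND, OR and AND NOT slides; `mp_neuron` and `GATES` are illustrative names.

```python
from itertools import product


def mp_neuron(inputs, weights, theta):
    """Return 1 if the weighted sum of binary inputs reaches theta, else 0."""
    return 1 if sum(w * x for w, x in zip(weights, inputs)) >= theta else 0


# (weights, threshold) for each two-input gate, as given on the slides.
GATES = {
    "AND": ((1, 1), 2),
    "OR": ((2, 2), 2),
    "AND NOT": ((2, -1), 2),  # X1 AND NOT X2
}

results = {}
for name, (weights, theta) in GATES.items():
    # Rows in the slides' order: (1,1), (1,0), (0,1), (0,0)
    results[name] = [mp_neuron((x1, x2), weights, theta)
                     for x1, x2 in product((1, 0), repeat=2)]
    print(name, results[name])
```

This prints `AND [1, 0, 0, 0]`, `OR [1, 1, 1, 0]` and `AND NOT [0, 1, 0, 0]`, matching the Y columns of the three tables.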

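XOR function (figure: X1 connects to Z1 with weight 2 and to Z2 with weight −1; X2 connects to Z2 with weight 2 and to Z1 with weight −1; Z1 and Z2 each connect to Y with weight 2):

| X1 | X2 | Y |
|---|---|---|
| 1 | 1 | 0 |
| 1 | 0 | 1 |
| 0 | 1 | 1 |
| 0 | 0 | 0 |

X1 XOR X2 = (X1 AND NOT X2) OR (X2 AND NOT X1)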

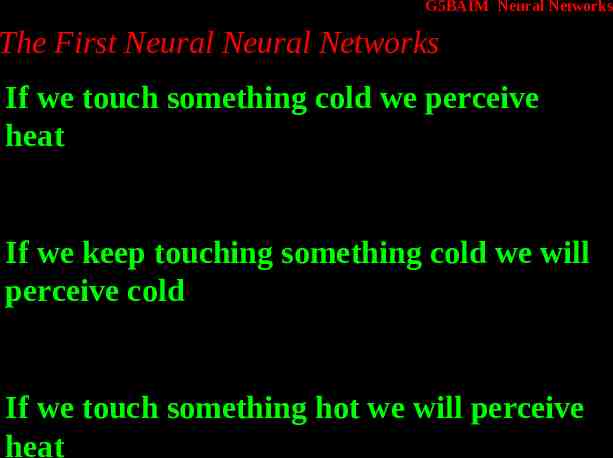

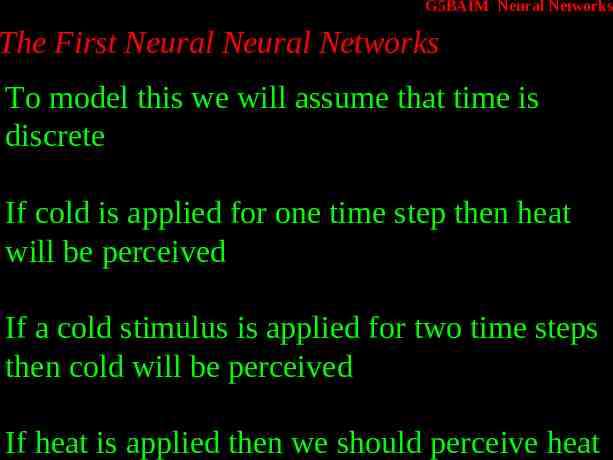
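The XOR network is two AND NOT units feeding an OR unit. A minimal sketch, assuming every unit uses threshold 2 as on the AND NOT and OR slides (the XOR slide itself does not state the thresholds); `xor_net` is an illustrative name.

```python
from itertools import product


def mp_neuron(inputs, weights, theta=2):
    """McCulloch-Pitts unit: 1 if the weighted input sum reaches theta (assumed 2)."""
    return 1 if sum(w * x for w, x in zip(weights, inputs)) >= theta else 0


def xor_net(x1, x2):
    z1 = mp_neuron((x1, x2), (2, -1))   # Z1 = X1 AND NOT X2
    z2 = mp_neuron((x1, x2), (-1, 2))   # Z2 = X2 AND NOT X1
    return mp_neuron((z1, z2), (2, 2))  # Y = Z1 OR Z2


print([xor_net(x1, x2) for x1, x2 in product((1, 0), repeat=2)])  # [0, 1, 1, 0]
```

Because Y is one layer further from the inputs, its output appears one time step after Z1 and Z2 have been updated.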

If we touch something cold for a very short time, we perceive heat. If we keep touching something cold, we perceive cold. If we touch something hot, we perceive heat.

To model this, we will assume that time is discrete. If cold is applied for one time step, then heat will be perceived. If a cold stimulus is applied for two time steps, then cold will be perceived. If heat is applied, then we should perceive heat.

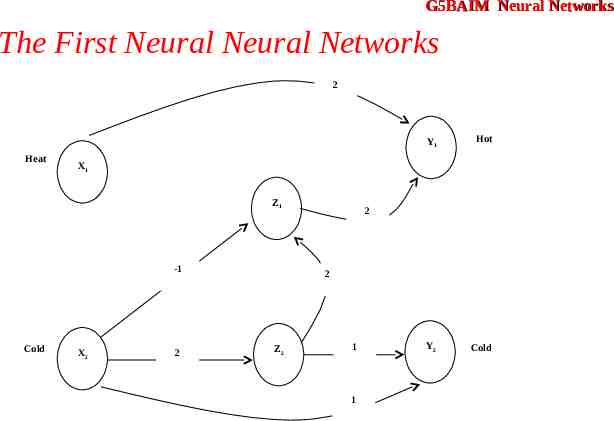

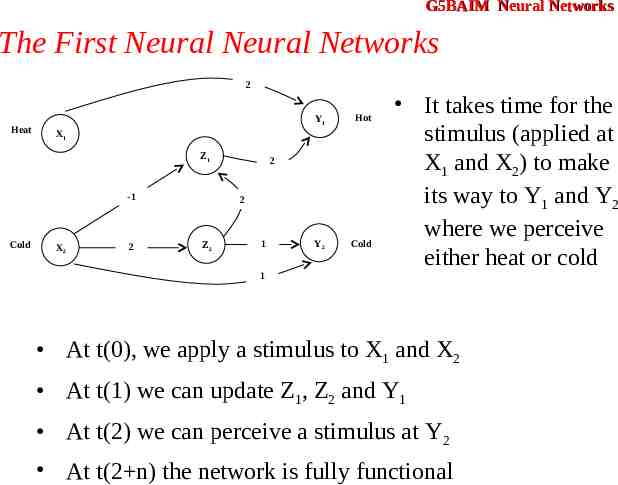

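(Figure: the hot/cold network. Inputs X1 (heat) and X2 (cold); hidden units Z1 and Z2; outputs Y1 (perceive heat) and Y2 (perceive cold). Connections: X1 → Y1 (weight 2), Z1 → Y1 (2), X2 → Z2 (2), Z2 → Z1 (2), X2 → Z1 (−1), X2 → Y2 (1), Z2 → Y2 (1).)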

It takes time for the stimulus (applied at X1 and X2) to make its way to Y1 and Y2, where we perceive either heat or cold. At t(0) we apply a stimulus to X1 and X2; at t(1) we can update Z1, Z2 and Y1; at t(2) we can perceive a stimulus at Y2; and at t(2+n) the network is fully functional.

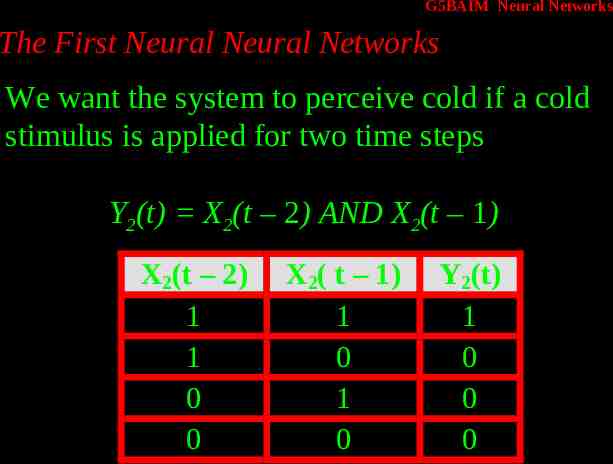

We want the system to perceive cold if a cold stimulus is applied for two time steps:

$$Y_2(t) = X_2(t-2) \text{ AND } X_2(t-1)$$

| X2(t−2) | X2(t−1) | Y2(t) |
|---|---|---|
| 1 | 1 | 1 |
| 1 | 0 | 0 |
| 0 | 1 | 0 |
| 0 | 0 | 0 |

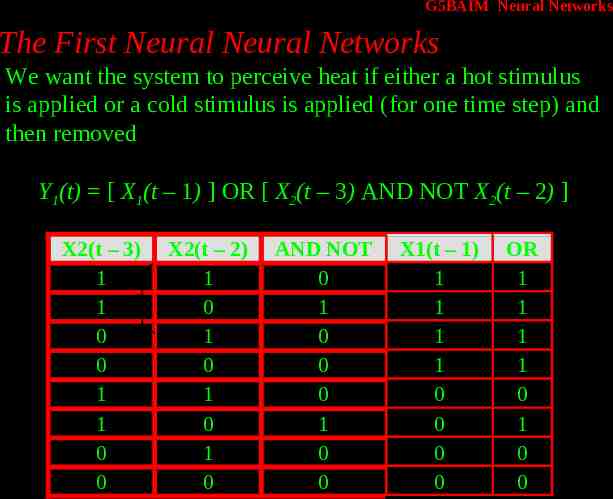

We want the system to perceive heat if either a hot stimulus is applied, or a cold stimulus is applied (for one time step) and then removed:

$$Y_1(t) = [X_1(t-1)] \text{ OR } [X_2(t-3) \text{ AND NOT } X_2(t-2)]$$

| X2(t−3) | X2(t−2) | X2(t−3) AND NOT X2(t−2) | X1(t−1) | Y1(t) (OR) |
|---|---|---|---|---|
| 1 | 1 | 0 | 1 | 1 |
| 1 | 0 | 1 | 1 | 1 |
| 0 | 1 | 0 | 1 | 1 |
| 0 | 0 | 0 | 1 | 1 |
| 1 | 1 | 0 | 0 | 0 |
| 1 | 0 | 1 | 0 | 1 |
| 0 | 1 | 0 | 0 | 0 |
| 0 | 0 | 0 | 0 | 0 |

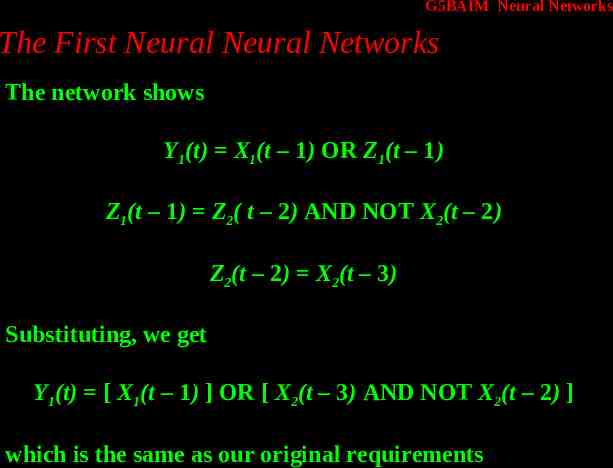

The network shows:

$$Y_1(t) = X_1(t-1) \text{ OR } Z_1(t-1)$$
$$Z_1(t-1) = Z_2(t-2) \text{ AND NOT } X_2(t-2)$$
$$Z_2(t-2) = X_2(t-3)$$

Substituting, we get

$$Y_1(t) = [X_1(t-1)] \text{ OR } [X_2(t-3) \text{ AND NOT } X_2(t-2)]$$

which is the same as our original requirement.

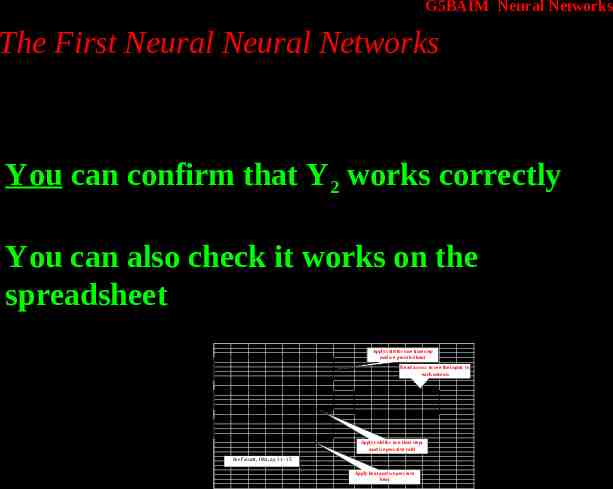

You can confirm that Y2 works correctly. You can also check that the network works on the spreadsheet (threshold 2 for every unit; read across to see the inputs to each neuron), which traces three cases over successive time steps: apply cold for one time step and we perceive heat; apply cold for two time steps and we perceive cold; apply heat and we perceive heat. See Fausett, 1994, pp. 31-35.

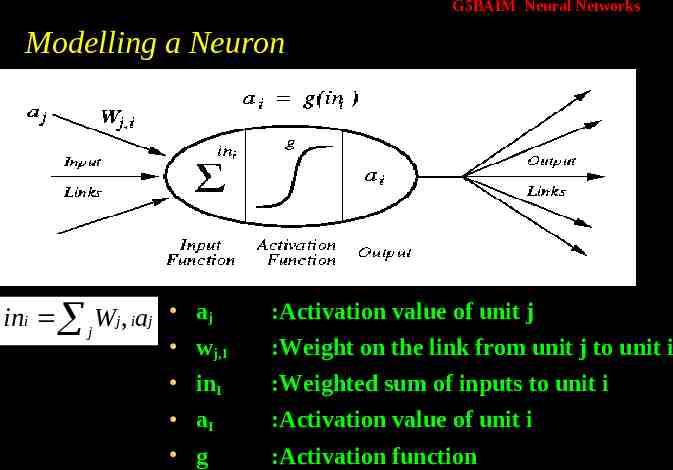
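The spreadsheet check can also be sketched as a synchronous simulation. This assumes the connection weights listed for the hot/cold figure (X1 → Y1 and Z1 → Y1 with weight 2, X2 → Z2 and Z2 → Z1 with weight 2, X2 → Z1 with weight −1, X2 → Y2 and Z2 → Y2 with weight 1), threshold 2 everywhere, and that a stimulus is 0 once it has been removed; `run` and `WEIGHTS` are illustrative names.

```python
THETA = 2  # every unit in the network uses threshold 2

# Weighted connections into each computing unit (source unit -> weight).
WEIGHTS = {
    "Z2": {"X2": 2},            # Z2(t) = X2(t-1)
    "Z1": {"Z2": 2, "X2": -1},  # Z1(t) = Z2(t-1) AND NOT X2(t-1)
    "Y1": {"X1": 2, "Z1": 2},   # perceive heat: X1(t-1) OR Z1(t-1)
    "Y2": {"X2": 1, "Z2": 1},   # perceive cold: X2(t-1) AND Z2(t-1)
}


def run(stimuli, steps):
    """Simulate the network; stimuli[t] is the (heat, cold) input applied at time t.

    Inputs are 0 once the stimulus list runs out. Each computing unit at time t
    is calculated only from the values of its sources at time t-1.
    """
    state = dict.fromkeys(["X1", "X2", "Z1", "Z2", "Y1", "Y2"], 0)
    history = []
    for t in range(steps):
        new_state = {
            unit: 1 if sum(w * state[src] for src, w in inputs.items()) >= THETA else 0
            for unit, inputs in WEIGHTS.items()
        }
        new_state["X1"], new_state["X2"] = stimuli[t] if t < len(stimuli) else (0, 0)
        state = new_state
        history.append(state)
    return history


scenarios = {
    "cold for one step": [(0, 1)],
    "cold for two steps": [(0, 1), (0, 1)],
    "heat": [(1, 0)],
}
for name, stimuli in scenarios.items():
    trace = run(stimuli, 4)
    print(name, [(s["Y1"], s["Y2"]) for s in trace])  # (Y1, Y2) at t = 0..3
```

Under these assumptions, one step of cold makes Y1 (heat) fire at t = 3, two steps of cold make Y2 (cold) fire at t = 2, and heat makes Y1 fire at t = 1, reflecting the one-time-step delay per connection.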

Modelling a Neuron

$$\mathit{in}_i = \sum_j W_{j,i}\, a_j \qquad a_i = g(\mathit{in}_i)$$

where $a_j$ is the activation value of unit j, $W_{j,i}$ is the weight on the link from unit j to unit i, $\mathit{in}_i$ is the weighted sum of inputs to unit i, $a_i$ is the activation value of unit i, and $g$ is the activation function.

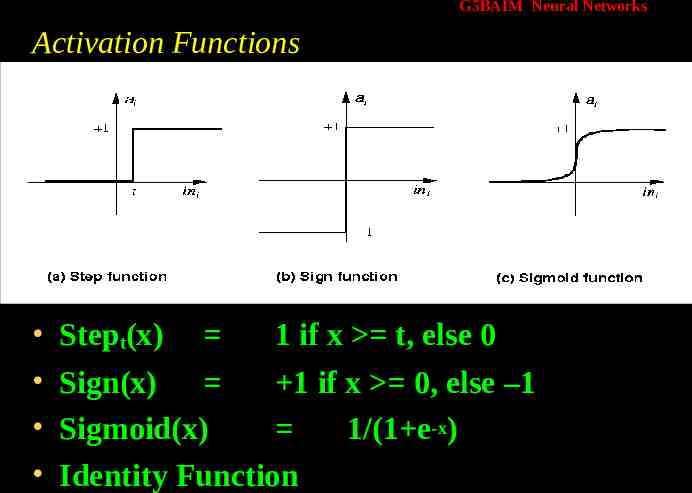

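Activation Functions

$$\mathrm{Step}_t(x) = \begin{cases} 1 & \text{if } x \ge t \\ 0 & \text{otherwise} \end{cases}$$

$$\mathrm{Sign}(x) = \begin{cases} +1 & \text{if } x \ge 0 \\ -1 & \text{otherwise} \end{cases}$$

$$\mathrm{Sigmoid}(x) = \frac{1}{1 + e^{-x}}$$

$$\mathrm{Identity}(x) = x$$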

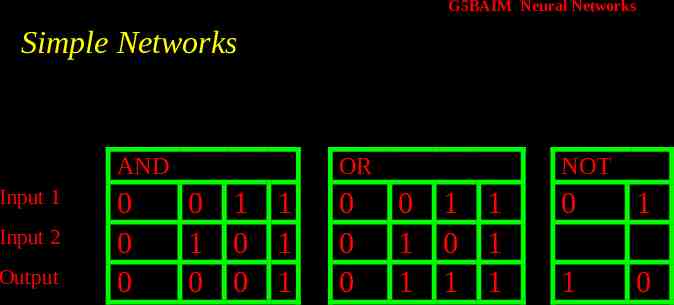

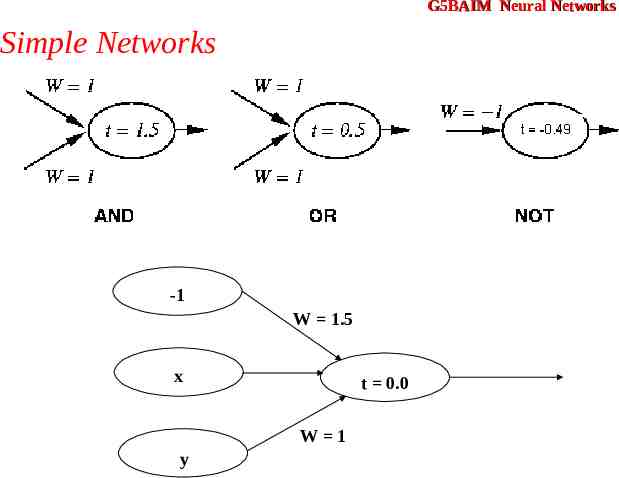
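The unit model and the activation functions fit together as a_i = g(in_i). A minimal sketch with the four functions above as interchangeable choices of g; the activations `a` and weights `W` are made-up illustrative values, and `unit_output` is an illustrative name.

```python
import math


def step(x, t=0.0):
    """Step_t(x): 1 if x >= t, else 0."""
    return 1 if x >= t else 0


def sign(x):
    """Sign(x): +1 if x >= 0, else -1."""
    return 1 if x >= 0 else -1


def sigmoid(x):
    """Sigmoid(x) = 1 / (1 + e^-x)."""
    return 1.0 / (1.0 + math.exp(-x))


def identity(x):
    """Identity(x) = x."""
    return x


def unit_output(activations, weights, g):
    """a_i = g(in_i), where in_i = sum_j W_{j,i} * a_j."""
    in_i = sum(w * a for w, a in zip(weights, activations))
    return g(in_i)


a = [1.0, 0.0, 1.0]   # illustrative activations a_j
W = [0.5, -0.3, 0.2]  # illustrative weights W_{j,i}
for g in (step, sign, sigmoid, identity):
    print(g.__name__, unit_output(a, W, g))
```

Here in_i = 0.7, so the step and sign units output 1, the sigmoid unit outputs roughly 0.67, and the identity unit passes 0.7 through unchanged.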

Simple Networks

| Input 1 | Input 2 | AND | OR |
|---|---|---|---|
| 0 | 0 | 0 | 0 |
| 0 | 1 | 0 | 1 |
| 1 | 0 | 0 | 1 |
| 1 | 1 | 1 | 1 |

| Input | NOT |
|---|---|
| 0 | 1 |
| 1 | 0 |

(Figure: a threshold unit with t = 0.0 and three inputs: a fixed input of −1 with weight W = 1.5, and inputs x and y with weight W = 1.)

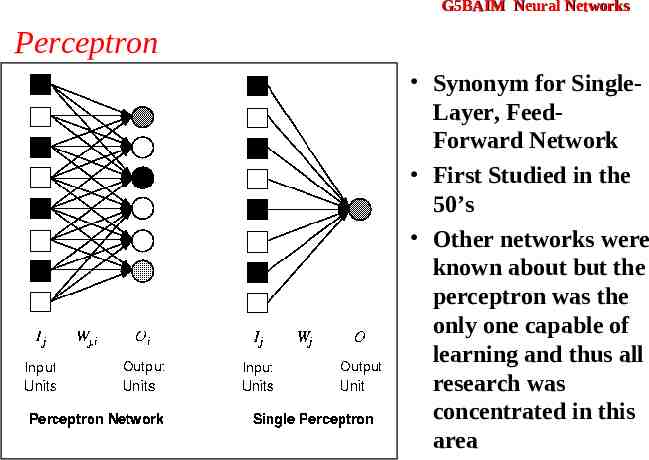
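Reading the figure's values (a fixed −1 input with weight 1.5, weight 1 on x and y, threshold t = 0.0), the unit computes AND: the −1 input turns its weight into an adjustable threshold. A minimal sketch; the OR variant with a bias weight of 0.5 is an illustrative assumption, not from the slides, and `threshold_unit` is an illustrative name.

```python
from itertools import product


def threshold_unit(x, y, w_bias=1.5, w=1.0, t=0.0):
    """Fire if -1*w_bias + w*x + w*y >= t.

    The fixed -1 input means w_bias acts as an adjustable threshold, so the
    unit's own threshold t can stay at 0.0.
    """
    total = -1 * w_bias + w * x + w * y
    return 1 if total >= t else 0


inputs = list(product((0, 1), repeat=2))  # (0,0), (0,1), (1,0), (1,1)
print([threshold_unit(x, y) for x, y in inputs])              # [0, 0, 0, 1] -> AND
print([threshold_unit(x, y, w_bias=0.5) for x, y in inputs])  # [0, 1, 1, 1] -> OR
```

Treating the threshold as just another weight is what later lets the learning rule adjust it along with the other weights.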

Perceptron

The term is a synonym for a single-layer, feed-forward network. Perceptrons were first studied in the 1950s. Other networks were known about, but the perceptron was the only one capable of learning, and thus all research was concentrated in this area.

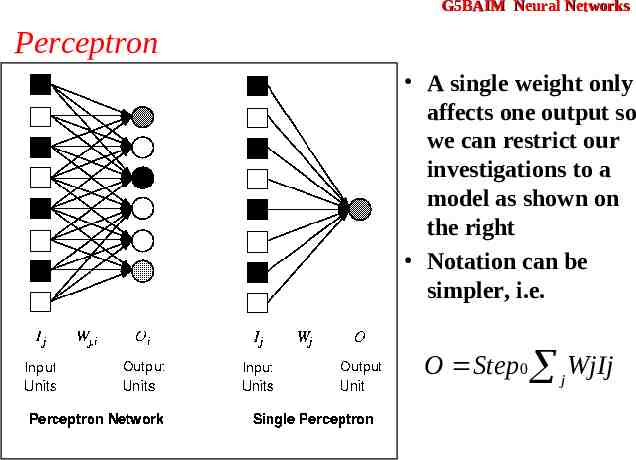

A single weight only affects one output, so we can restrict our investigations to a model with a single output unit, as shown in the figure. The notation can then be simpler:

$$O = \mathrm{Step}_0\Big(\sum_j W_j I_j\Big)$$

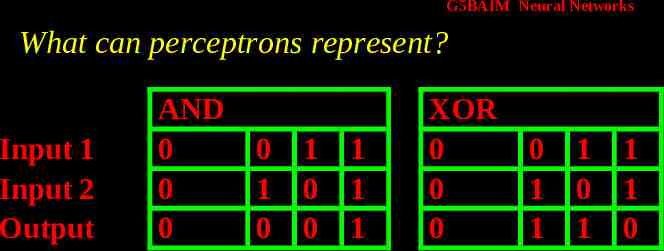

What can perceptrons represent?

| Input 1 | Input 2 | AND | XOR |
|---|---|---|---|
| 0 | 0 | 0 | 0 |
| 0 | 1 | 0 | 1 |
| 1 | 0 | 0 | 1 |
| 1 | 1 | 1 | 0 |

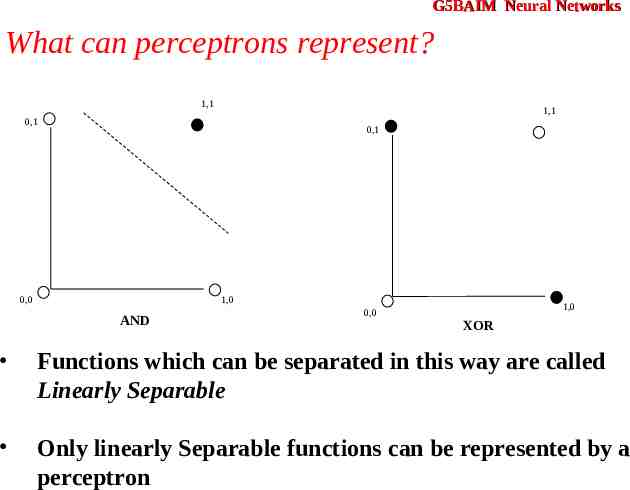

(Figure: the points (0,0), (0,1), (1,0) and (1,1) plotted for AND and for XOR. For AND a single straight line separates the point that outputs 1 from those that output 0; for XOR no such line exists.)

Functions which can be separated in this way are called linearly separable. Only linearly separable functions can be represented by a perceptron.

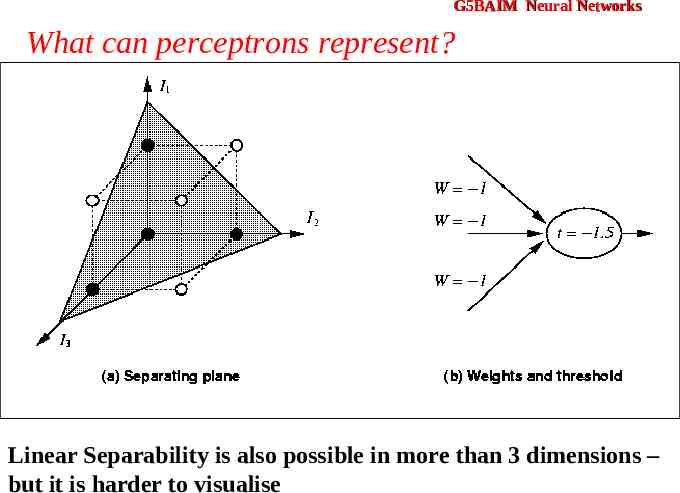
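One way to see the difference is to search for weights. The sketch below tries every (W0, W1, W2) on a coarse grid for a perceptron with a fixed −1 bias input and a Step_0 output. It is only a demonstration: the grid (steps of 0.5 from −3 to 3) is an arbitrary choice, and XOR's failure is guaranteed by theory for all real weights, not proved by this search. `find_weights` is an illustrative name.

```python
from itertools import product

POINTS = [(0, 0), (0, 1), (1, 0), (1, 1)]
AND = [0, 0, 0, 1]
XOR = [0, 1, 1, 0]


def perceptron(w0, w1, w2, i1, i2):
    """Step_0 of the weighted sum, with a fixed bias input of -1 on w0."""
    return 1 if -w0 + w1 * i1 + w2 * i2 >= 0 else 0


def find_weights(targets, grid):
    """Return the first (w0, w1, w2) on the grid that reproduces targets, else None."""
    for w0, w1, w2 in product(grid, repeat=3):
        if all(perceptron(w0, w1, w2, *p) == t for p, t in zip(POINTS, targets)):
            return (w0, w1, w2)
    return None


grid = [k / 2 for k in range(-6, 7)]  # -3.0, -2.5, ..., 3.0
print("AND:", find_weights(AND, grid))
print("XOR:", find_weights(XOR, grid))  # None: no straight line separates XOR
```

The search finds a separating line for AND immediately, but no weights on the grid (or anywhere else) reproduce XOR.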

Linear separability also extends to higher dimensions, where the separating line becomes a plane (or, beyond three dimensions, a hyperplane), but it is harder to visualise.

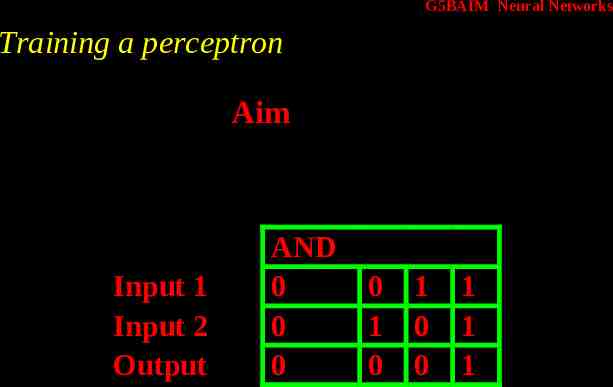

Training a perceptron

Aim: train a perceptron to produce the AND function.

| Input 1 | Input 2 | Output |
|---|---|---|
| 0 | 0 | 0 |
| 0 | 1 | 0 |
| 1 | 0 | 0 |
| 1 | 1 | 1 |

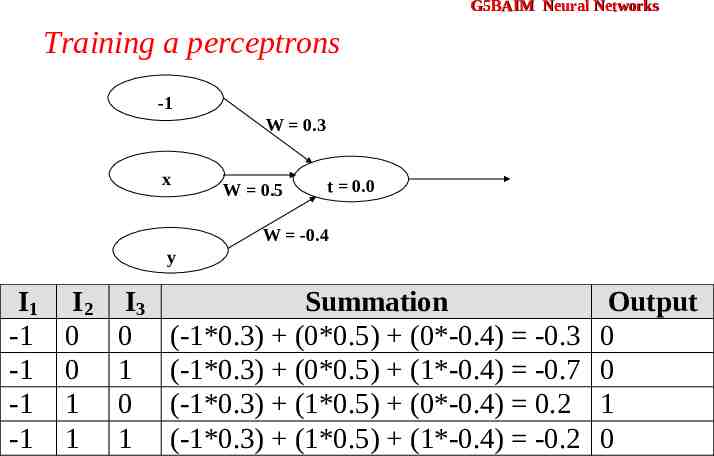

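(Figure: a perceptron with threshold t = 0.0 and three inputs: a fixed −1 input with weight W = 0.3, x with W = 0.5, and y with W = −0.4.)

| I1 | I2 | I3 | Summation | Output |
|---|---|---|---|---|
| −1 | 0 | 0 | (−1 × 0.3) + (0 × 0.5) + (0 × −0.4) = −0.3 | 0 |
| −1 | 0 | 1 | (−1 × 0.3) + (0 × 0.5) + (1 × −0.4) = −0.7 | 0 |
| −1 | 1 | 0 | (−1 × 0.3) + (1 × 0.5) + (0 × −0.4) = 0.2 | 1 |
| −1 | 1 | 1 | (−1 × 0.3) + (1 × 0.5) + (1 × −0.4) = −0.2 | 0 |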

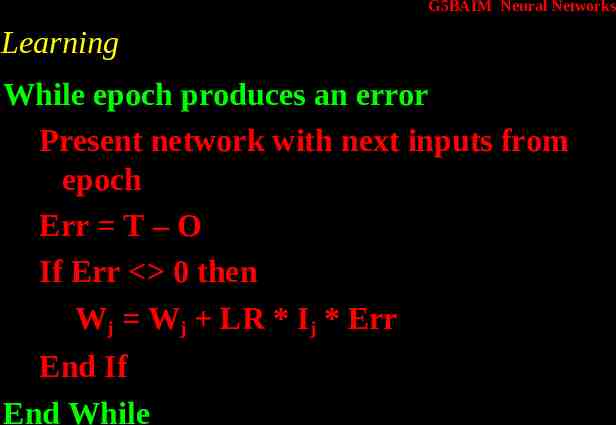

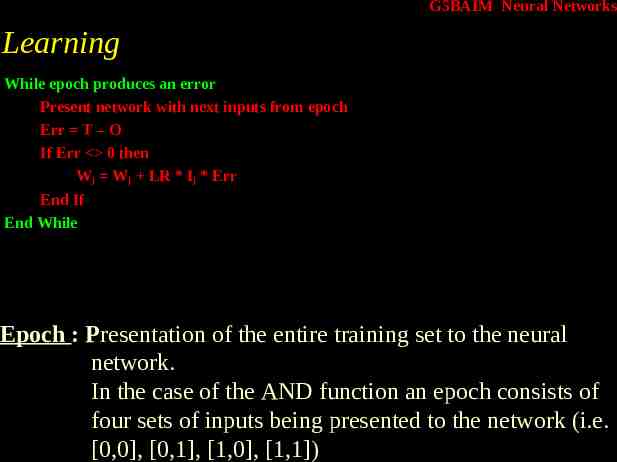

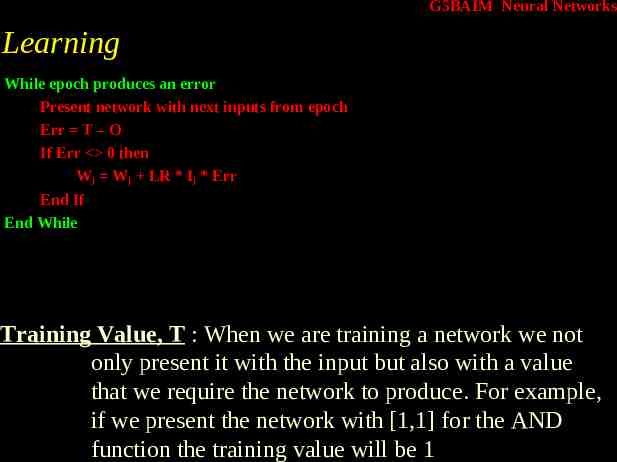
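The Summation and Output columns can be reproduced directly. A minimal sketch using the slide's initial weights (0.3 on the fixed −1 input I1, 0.5 on I2, −0.4 on I3) and a Step_0 output; `summation` is an illustrative name.

```python
WEIGHTS = (0.3, 0.5, -0.4)  # weights on I1 (fixed -1 input), I2 (x) and I3 (y)


def summation(inputs, weights=WEIGHTS):
    """Weighted sum of the inputs, as in the Summation column."""
    return sum(i * w for i, w in zip(inputs, weights))


rows = []
for i2, i3 in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    s = summation((-1, i2, i3))
    rows.append((i2, i3, round(s, 2), 1 if s >= 0 else 0))  # Step_0 output
for row in rows:
    print(row)
```

The outputs 0, 0, 1, 0 differ from the AND targets 0, 0, 0, 1 on the last two rows, so these initial weights still need training.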

Learning

While epoch produces an error
  Present network with next inputs from epoch
  Err = T − O
  If Err ≠ 0 then
    Wj = Wj + LR * Ij * Err
  End If
End While

Epoch: presentation of the entire training set to the neural network. In the case of the AND function, an epoch consists of four sets of inputs being presented to the network (i.e. [0,0], [0,1], [1,0], [1,1]).

Training value, T: when we are training a network, we present it not only with the input but also with the value that we require the network to produce. For example, if we present the network with [1,1] for the AND function, the training value will be 1.

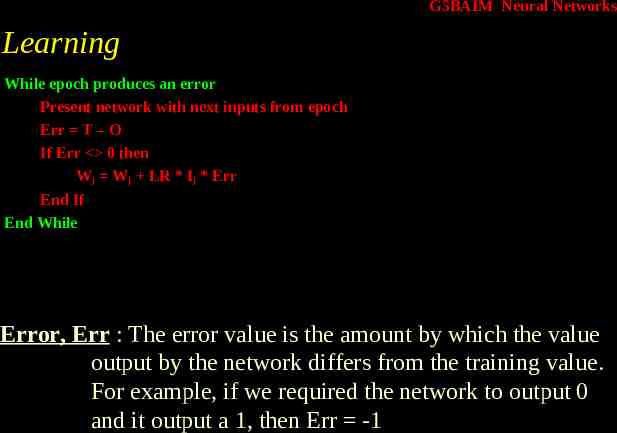

Error, Err: the amount by which the value output by the network differs from the training value. For example, if we required the network to output 0 and it output 1, then Err = 0 − 1 = −1.

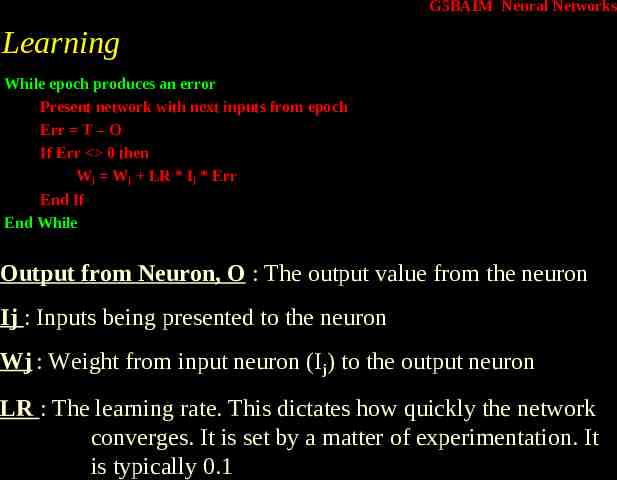

Output from neuron, O: the output value from the neuron. Ij: the inputs being presented to the neuron. Wj: the weight from input neuron (Ij) to the output neuron. LR: the learning rate, which dictates how quickly the network converges. It is set by experimentation and is typically 0.1.

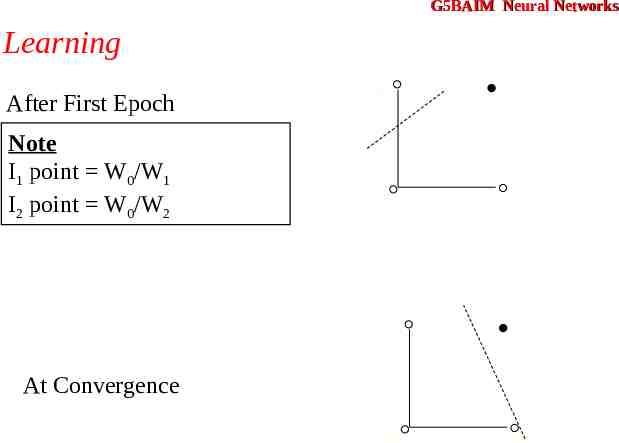
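Putting the loop and the definitions together gives a runnable version of the learning algorithm. A minimal sketch, assuming the initial weights from the training example (0.3, 0.5, −0.4), LR = 0.1, a Step_0 output that fires when the sum is ≥ 0, and the training pairs presented in the order [0,0], [0,1], [1,0], [1,1]. The `max_epochs` guard and the names `train` and `output` are additions for illustration.

```python
AND_SET = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]


def output(weights, inputs):
    """O = Step_0(sum_j W_j * I_j), with I_0 = -1 as the bias input."""
    total = sum(w * i for w, i in zip(weights, (-1, *inputs)))
    return 1 if total >= 0 else 0


def train(training_set, weights, lr=0.1, max_epochs=1000):
    """Repeat epochs until one produces no error (the 'While' loop)."""
    weights = list(weights)
    for epoch in range(1, max_epochs + 1):
        epoch_had_error = False
        for inputs, target in training_set:          # one epoch = whole training set
            err = target - output(weights, inputs)   # Err = T - O
            if err != 0:
                epoch_had_error = True
                # Wj = Wj + LR * Ij * Err, including the bias input I0 = -1
                for j, i_j in enumerate((-1, *inputs)):
                    weights[j] += lr * i_j * err
        if not epoch_had_error:
            return weights, epoch
    raise RuntimeError("did not converge within max_epochs")


weights, epochs = train(AND_SET, (0.3, 0.5, -0.4))
print("converged after", epochs, "epochs; weights =", [round(w, 2) for w in weights])
print([output(weights, x) for x, _ in AND_SET])  # [0, 0, 0, 1]
```

Because AND is linearly separable, the loop is guaranteed to reach an error-free epoch; the `max_epochs` guard only protects against non-separable training sets such as XOR.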

(Figure: the decision line in the I1–I2 plane, plotted with the points (0,0), (0,1), (1,0) and (1,1) after the first epoch and at convergence. Note: the line crosses the I1 axis at W0/W1 and the I2 axis at W0/W2.)
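The two points on the figure follow from the decision line −W0 + W1·I1 + W2·I2 = 0: setting I2 = 0 gives I1 = W0/W1, and setting I1 = 0 gives I2 = W0/W2. A minimal sketch; the example weights are the initial ones from the training slide, and `decision_line_intercepts` is an illustrative name.

```python
def decision_line_intercepts(w0, w1, w2):
    """Axis crossings of -w0 + w1*I1 + w2*I2 = 0 (None if the line is parallel to that axis)."""
    i1_point = w0 / w1 if w1 != 0 else None  # I1 point = W0/W1 (where I2 = 0)
    i2_point = w0 / w2 if w2 != 0 else None  # I2 point = W0/W2 (where I1 = 0)
    return i1_point, i2_point


print(decision_line_intercepts(0.3, 0.5, -0.4))  # roughly (0.6, -0.75)
```

Re-running it on the weights after each epoch shows how training moves the line until it separates (1,1) from the other three points.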

G5BAIM Artificial Intelligence Methods Graham Kendall End of Neural Networks