Map-Reduce With Hadoop



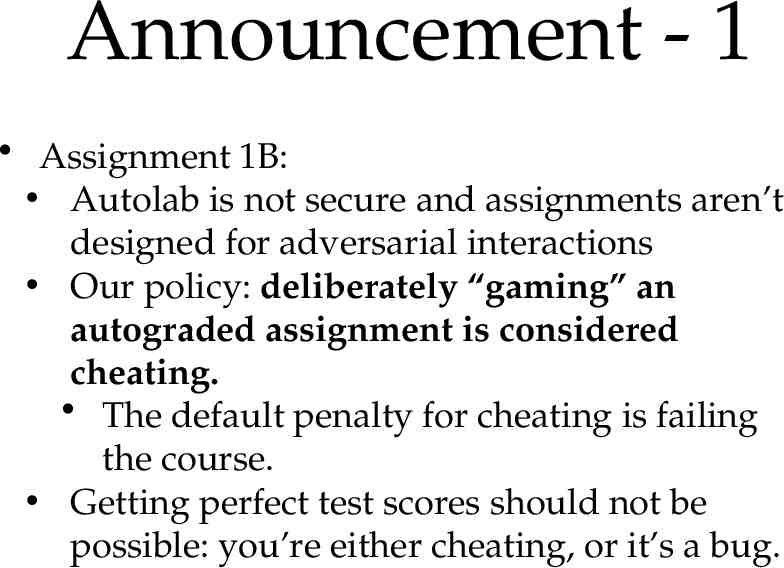

Announcement - 1. Assignment 1B: Autolab is not secure, and assignments aren’t designed for adversarial interactions. Our policy: deliberately “gaming” an autograded assignment is considered cheating. The default penalty for cheating is failing the course. Getting perfect test scores should not be possible: you’re either cheating, or it’s a bug.

Announcement - 2. Paper presentations: 3/3 and 3/5. Projects: see “project info” on the wiki. 1-2 page writeup of your idea: 2/17. Response to my feedback: 3/5. Option for 605 students to collaborate: proposals will be posted; proposers can advertise slots for collaborators, who can be 605 students (1-2 per project max). “Pay”: 1 less assignment, no exam.

Today: from stream sort to hadoop. We looked at algorithms consisting of:
- Sorting (to organize messages)
- Streaming (low-memory, line-by-line) file transformations (“map” operations)
- Streaming “reduce” operations, like summing counts, that read input files sorted by key and operate on contiguous runs of lines with the same key

Our algorithms could be expressed as sequences of map-sort-reduce triples (allowing identity maps and reduces) operating on sequences of key-value pairs. To parallelize, we can look at parallelizing these.

Today: from stream sort to hadoop. Important point: our code is not CPU-bound, it’s I/O-bound. To speed it up, we need to add more disk drives, not more CPUs. Example: finding a particular line in 1 TB of data.

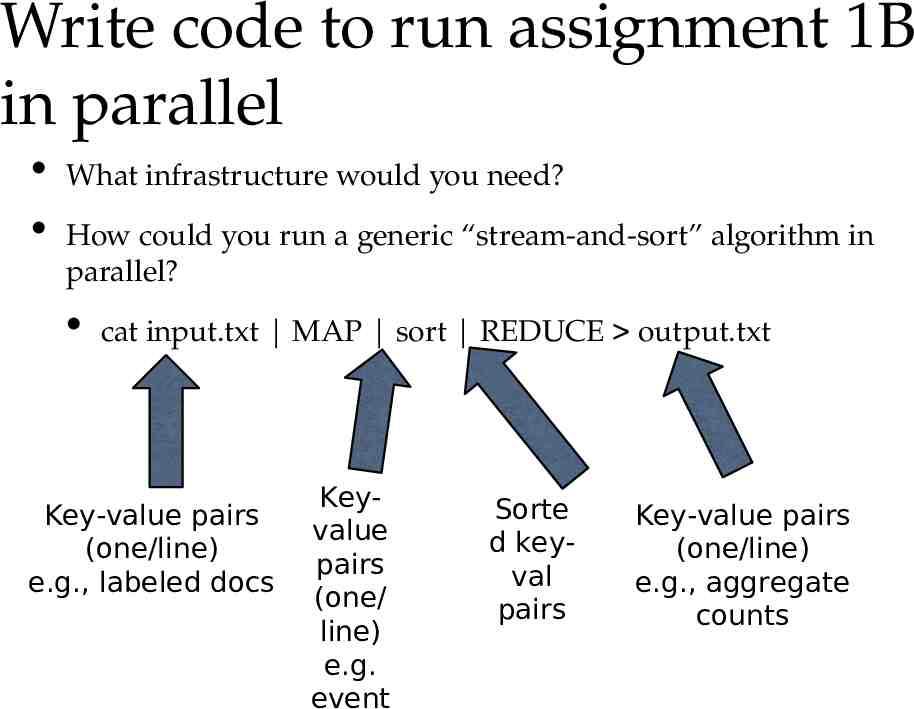

Write code to run assignment 1B in parallel. What infrastructure would you need? How could you run a generic “stream-and-sort” algorithm in parallel?

    cat input.txt | MAP | sort | REDUCE > output.txt

- input.txt: key-value pairs (one/line), e.g., labeled docs
- MAP output: key-value pairs (one/line), e.g., event
- sort output: sorted key-value pairs
- output.txt: key-value pairs (one/line), e.g., aggregate counts

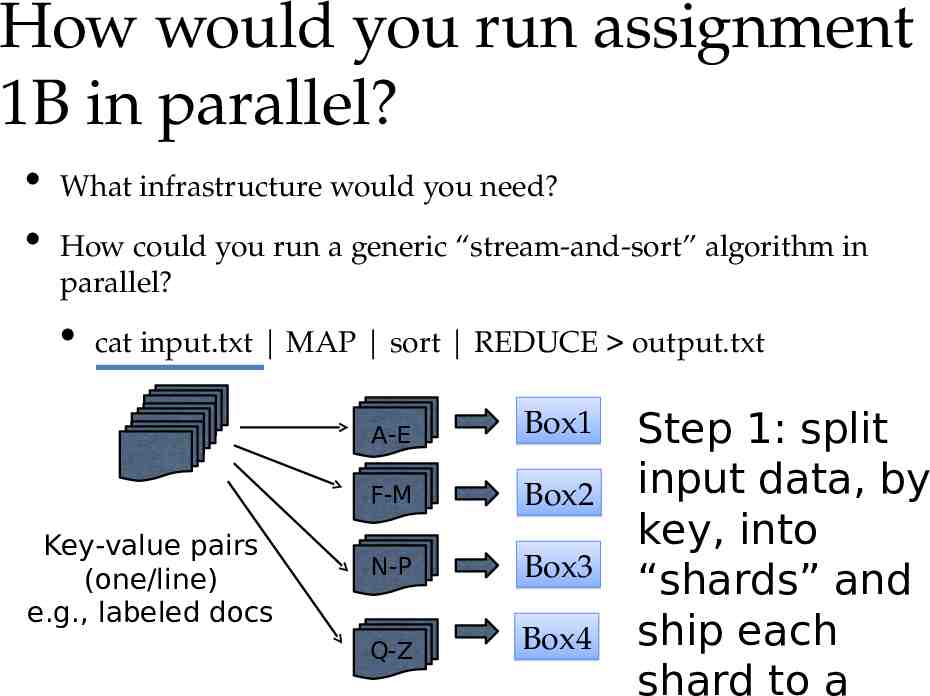

How would you run assignment 1B in parallel? What infrastructure would you need? How could you run a generic “stream-and-sort” algorithm in parallel?

    cat input.txt | MAP | sort | REDUCE > output.txt

Input: key-value pairs (one/line), e.g., labeled docs. Key ranges: A-E: Box1, F-M: Box2, N-P: Box3, Q-Z: Box4.

Step 1: split input data, by key, into “shards” and ship each shard to a different box.

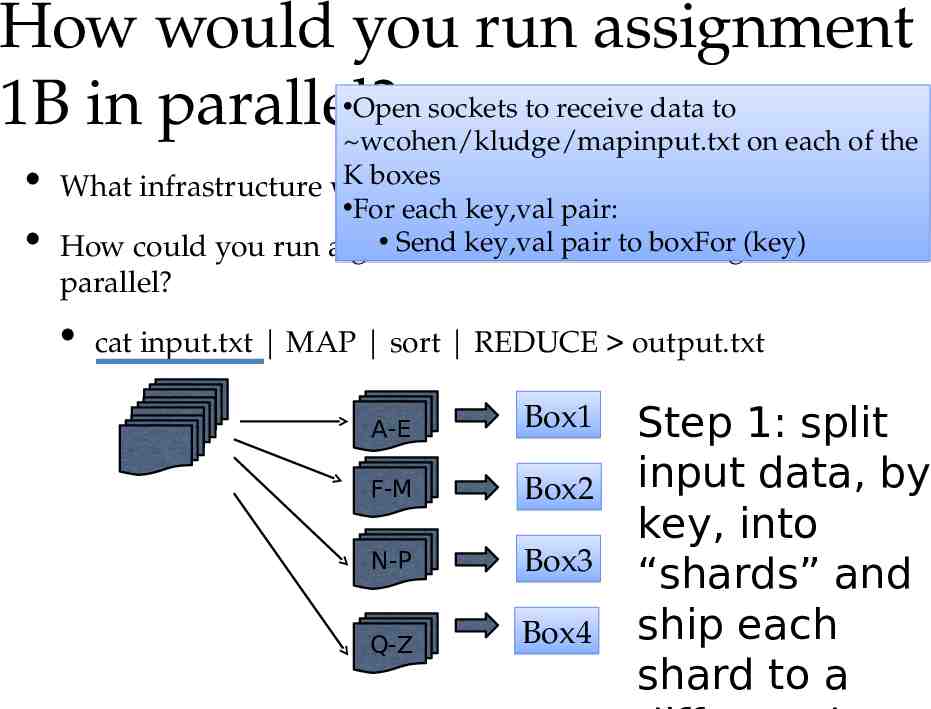

How would you run assignment 1B in parallel?
- Open sockets to receive data to wcohen/kludge/mapinput.txt on each of the K boxes.
- For each key,val pair: send the key,val pair to boxFor(key).

Key ranges: A-E: Box1, F-M: Box2, N-P: Box3, Q-Z: Box4.

Step 1: split input data, by key, into “shards” and ship each shard to a different box.

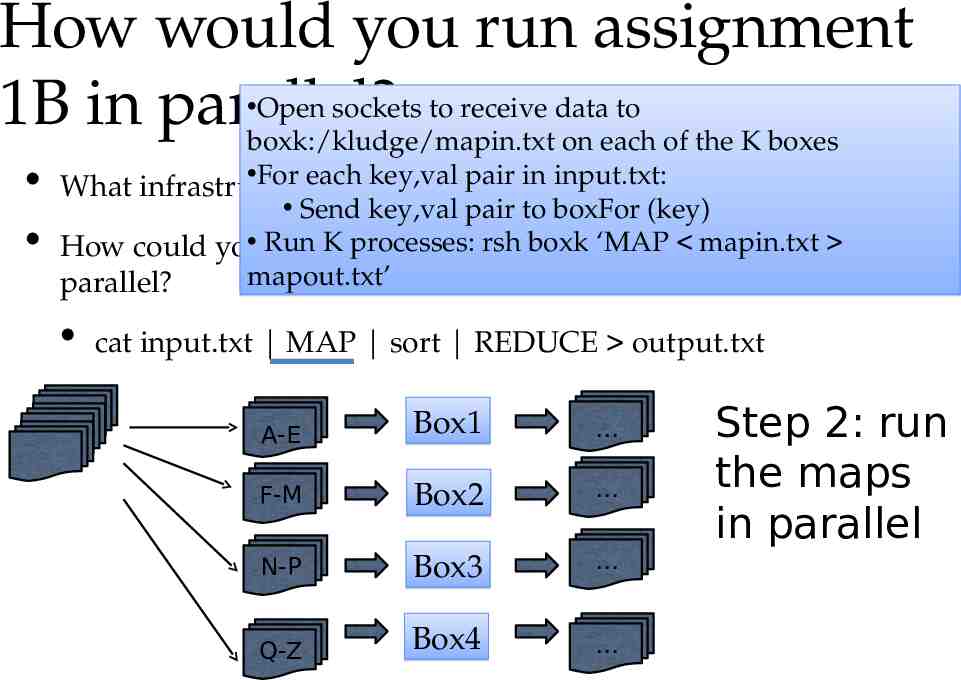

How would you run assignment 1B in parallel?
- Open sockets to receive data to boxk:/kludge/mapin.txt on each of the K boxes.
- For each key,val pair in input.txt: send the key,val pair to boxFor(key).
- Run K processes: rsh boxk ‘MAP < mapin.txt > mapout.txt’

Key ranges: A-E: Box1, F-M: Box2, N-P: Box3, Q-Z: Box4.

Step 2: run the maps in parallel.

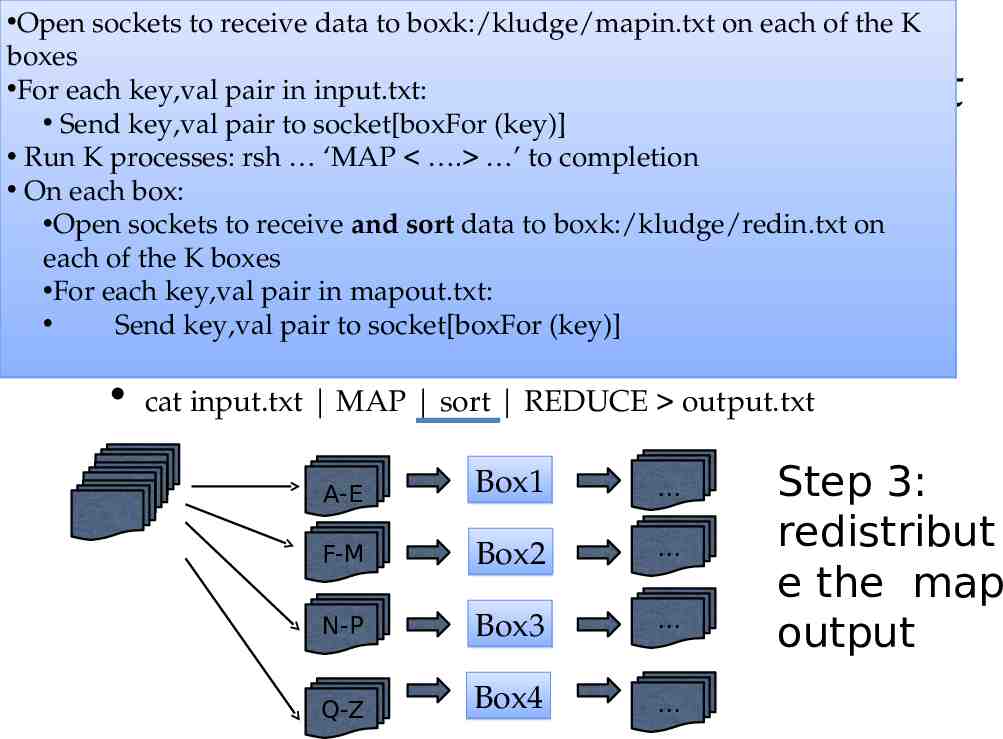

How would you run assignment 1B in parallel?
- Open sockets to receive data to boxk:/kludge/mapin.txt on each of the K boxes.
- For each key,val pair in input.txt: send the key,val pair to socket[boxFor(key)].
- Run K processes: rsh ... ‘MAP ...’ to completion.
- On each box: open sockets to receive and sort data to boxk:/kludge/redin.txt on each of the K boxes; then, for each key,val pair in mapout.txt, send the key,val pair to socket[boxFor(key)].

Key ranges: A-E: Box1, F-M: Box2, N-P: Box3, Q-Z: Box4.

Step 3: redistribute the map output.

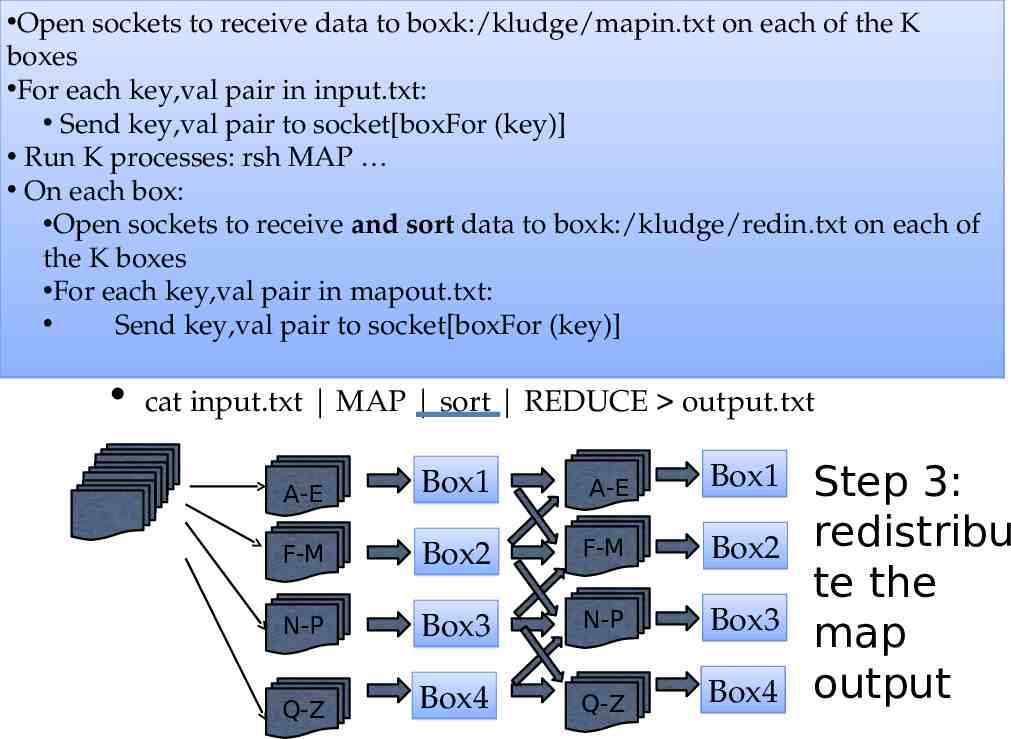

Step 3, continued: redistribute the map output. Each map-side box (A-E: Box1, F-M: Box2, N-P: Box3, Q-Z: Box4) sends every key,val pair in its mapout.txt to socket[boxFor(key)], so the pair arrives in redin.txt on the reduce-side box that owns its key range (again A-E: Box1, F-M: Box2, N-P: Box3, Q-Z: Box4).

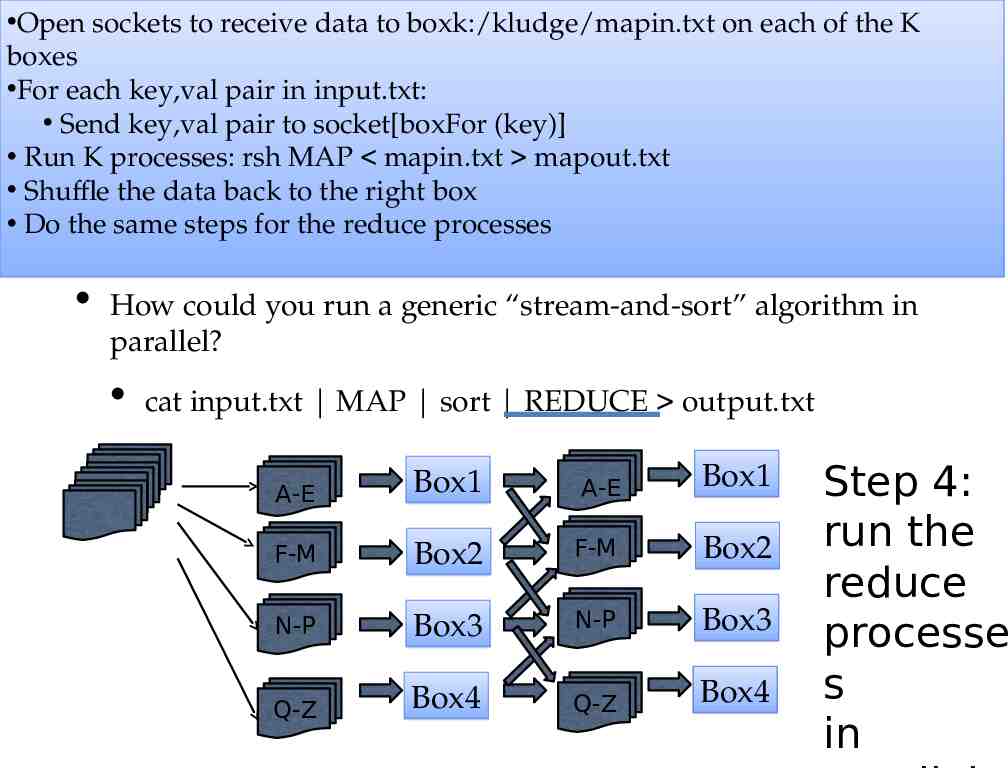

How would you run assignment 1B in parallel?
- Open sockets to receive data to boxk:/kludge/mapin.txt on each of the K boxes.
- For each key,val pair in input.txt: send the key,val pair to socket[boxFor(key)].
- Run K processes: rsh boxk ‘MAP < mapin.txt > mapout.txt’
- Shuffle the data back to the right box.
- Do the same steps for the reduce processes.

Step 4: run the reduce processes in parallel.

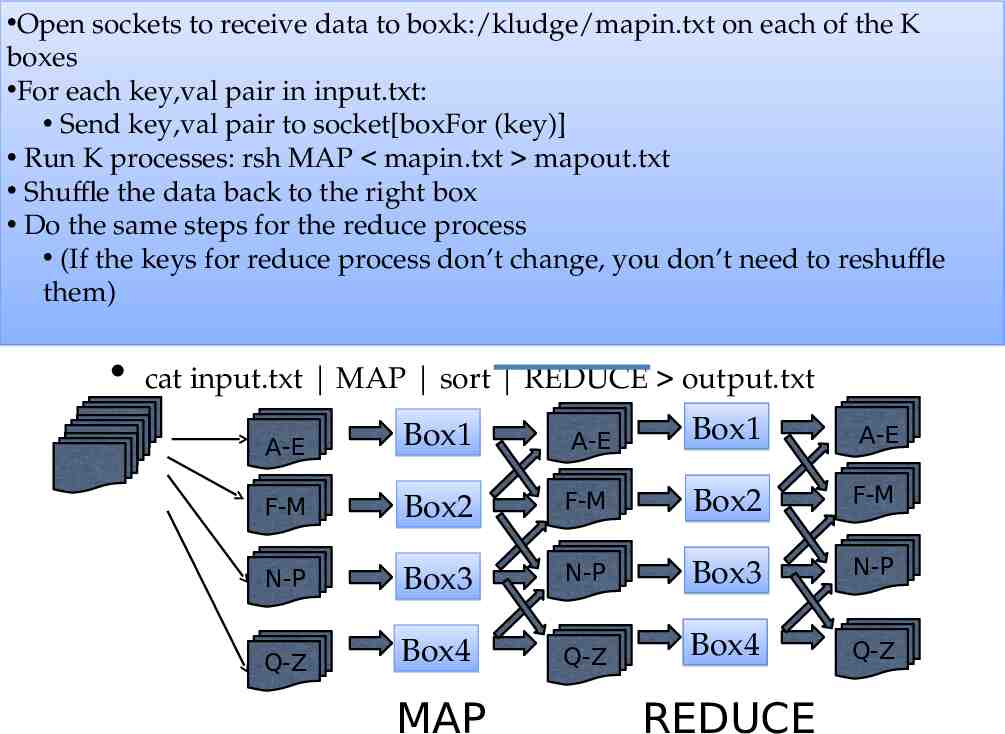

How would you run assignment 1B in parallel?
- Open sockets to receive data to boxk:/kludge/mapin.txt on each of the K boxes.
- For each key,val pair in input.txt: send the key,val pair to socket[boxFor(key)].
- Run K processes: rsh boxk ‘MAP < mapin.txt > mapout.txt’
- Shuffle the data back to the right box.
- Do the same steps for the reduce process. (If the keys for the reduce process don’t change, you don’t need to reshuffle them.)

The same key ranges (A-E: Box1, F-M: Box2, N-P: Box3, Q-Z: Box4) are used for the input shards, the MAP stage, and the REDUCE stage.
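
The four steps above can be simulated in a single process to see how the data moves. This is only a sketch under stated assumptions: the "boxes" are in-memory lists rather than machines, `box_for` uses a CRC32 hash instead of the alphabetical ranges on the slide, and `map_words`, `reduce_sum`, and `run_job` are hypothetical names chosen for illustration (a word-count job, not assignment 1B).

```python
import zlib
from collections import defaultdict
from itertools import groupby

K = 4  # hypothetical number of boxes


def box_for(key, k=K):
    """Pick the box that owns a key. A stable hash is used so that every
    sender agrees (Python's built-in str hash is randomized per process)."""
    return zlib.crc32(key.encode("utf-8")) % k


def shuffle(pairs, k=K):
    """Route each (key, value) pair to the shard owned by box_for(key)."""
    shards = defaultdict(list)
    for key, value in pairs:
        shards[box_for(key, k)].append((key, value))
    return shards


def map_words(doc_id, text):
    """Example MAP: emit (word, 1) for every word in a document."""
    for word in text.split():
        yield word.lower(), 1


def reduce_sum(sorted_pairs):
    """Example REDUCE: sum values over contiguous runs of equal keys."""
    for key, run in groupby(sorted_pairs, key=lambda kv: kv[0]):
        yield key, sum(value for _, value in run)


def run_job(pairs, k=K):
    # Step 1: split the input by key into shards, one per box.
    map_in = shuffle(pairs, k)
    # Step 2: run MAP on every shard (sequentially here, in parallel for real).
    map_out = [kv
               for shard in map_in.values()
               for doc_id, text in shard
               for kv in map_words(doc_id, text)]
    # Step 3: redistribute the map output so each key lands on one box.
    red_in = shuffle(map_out, k)
    # Step 4: sort and reduce each shard independently.
    result = {}
    for shard in red_in.values():
        result.update(reduce_sum(sorted(shard)))
    return result


docs = [("doc1", "a rose is a rose"), ("doc2", "is a rose")]
counts = run_job(docs)
print(counts)
```

Because every pair with a given key goes to the same shard in step 3, each reducer can sum its keys without looking at any other shard.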

1. This would be pretty systems-y (remote copy files, waiting for remote processes, ...)
2. It would take work to make it useful...

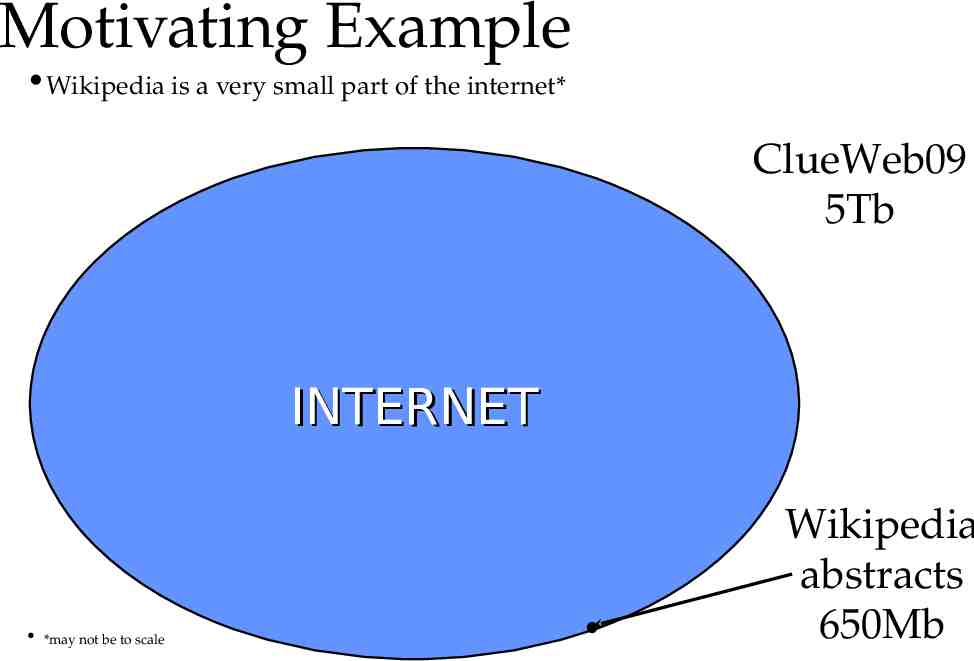

Motivating Example: Wikipedia is a very small part of the internet*. ClueWeb09: 5 TB. Wikipedia abstracts: 650 MB. (*INTERNET may not be to scale.)

1. This would be pretty systems-y (remote copy files, waiting for remote processes, ...)
2. It would take work to make it run for 500 jobs.
   - Reliability: replication, restarts, monitoring jobs, ...
   - Efficiency: load balancing, reducing file/network I/O, optimizing ...

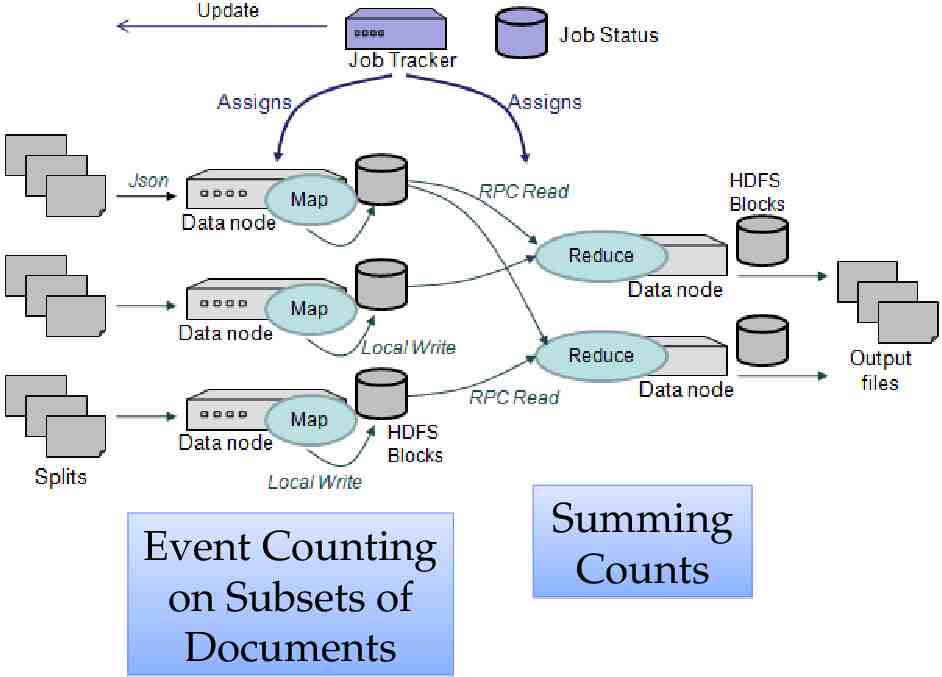

Event Counting on Subsets of Documents Summing Counts


Parallel and Distributed Computing: MapReduce pilfered from: Alona Fyshe

Inspiration not Plagiarism. This is not the first lecture ever on Mapreduce. I borrowed from Alona Fyshe, and she borrowed from:
- Jimmy Lin: http://www.umiacs.umd.edu/~jimmylin/cloud-computing/SIGIR-2009/Lin-MapReduce-SIGIR2009.pdf
- Google: http://code.google.com/edu/submissions/mapreduce-minilecture/listing.html and http://code.google.com/edu/submissions/mapreduce/listing.html
- Cloudera: http://vimeo.com/3584536

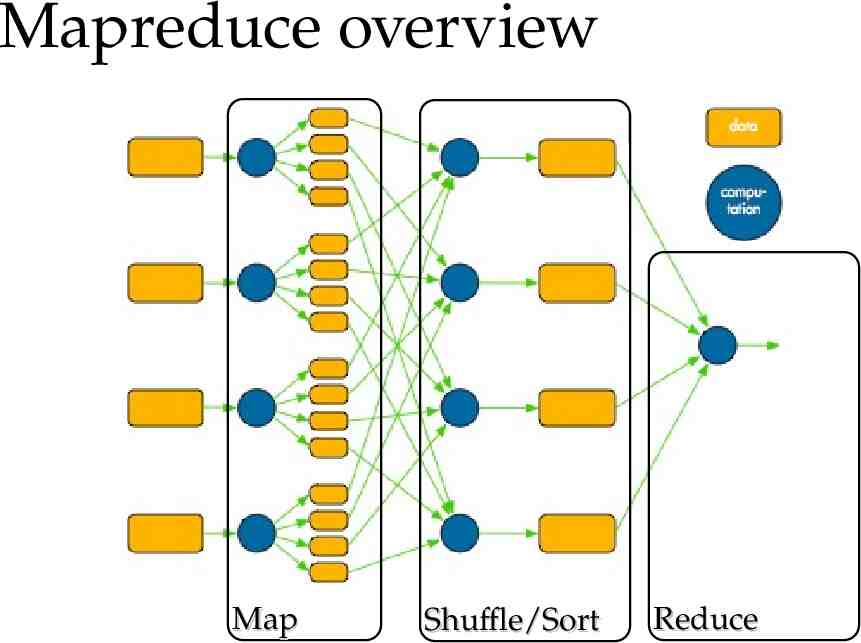

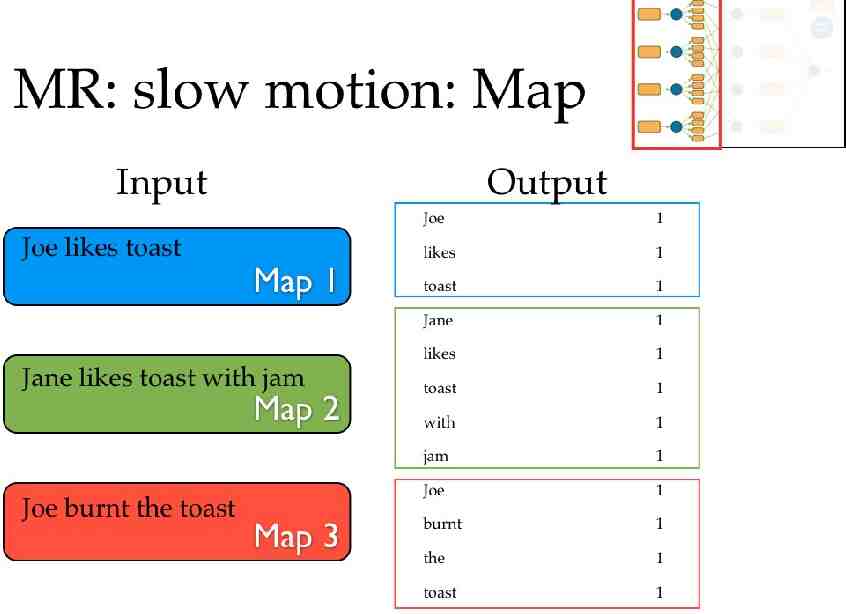

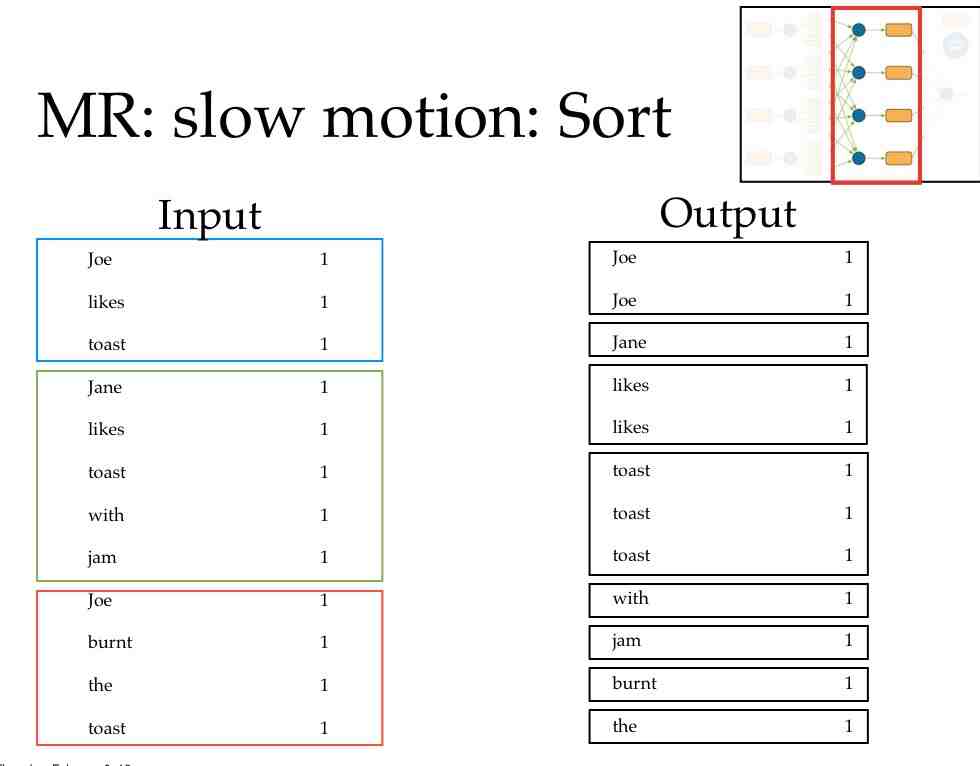

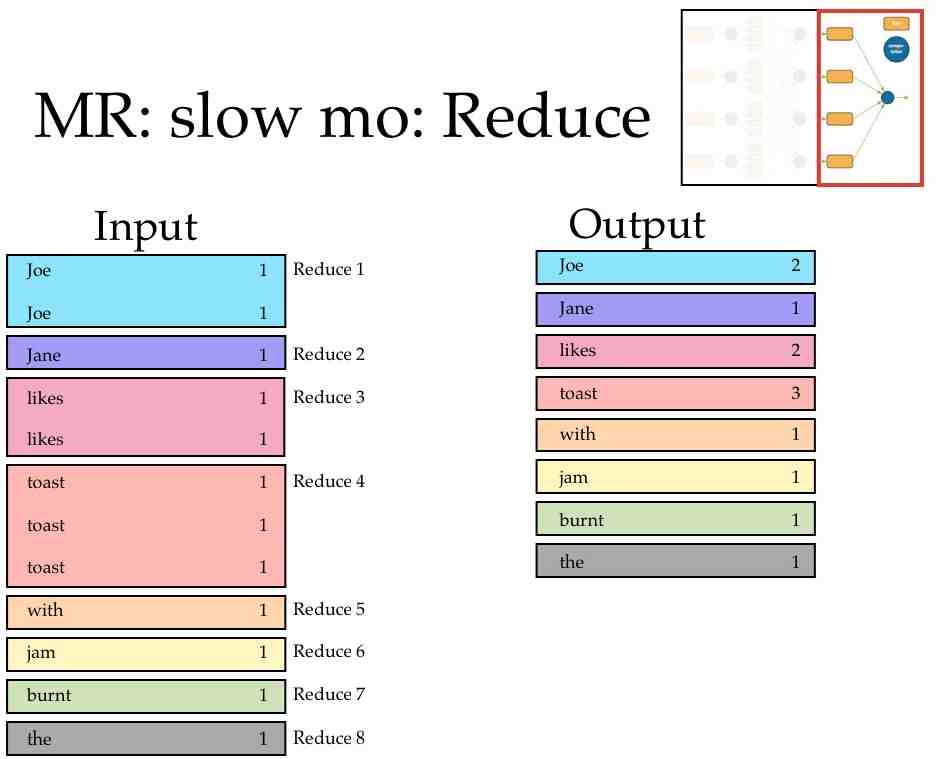

Surprise, you mapreduced! Mapreduce has three main phases:
- Map (send each input record to a key)
- Sort (put all of one key in the same place), handled behind the scenes
- Reduce (operate on each key and its set of values)

The terms come from functional programming: map(lambda x:x.upper(),["william","w","cohen"]) returns ['WILLIAM', 'W', 'COHEN'], and reduce(lambda x,y:x+"-"+y,["william","w","cohen"]) returns 'william-w-cohen'.
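
For readers trying the functional-programming example in Python 3, a minimal runnable version follows. It reflects two Python 3 details that the slide's notation does not show: `map` returns a lazy iterator, so it is wrapped in `list`, and `reduce` has to be imported from `functools`.

```python
from functools import reduce

names = ["william", "w", "cohen"]

# map applies the function to every element independently.
upper = list(map(lambda x: x.upper(), names))

# reduce folds the list left to right into a single value.
joined = reduce(lambda x, y: x + "-" + y, names)

print(upper)   # ['WILLIAM', 'W', 'COHEN']
print(joined)  # william-w-cohen
```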

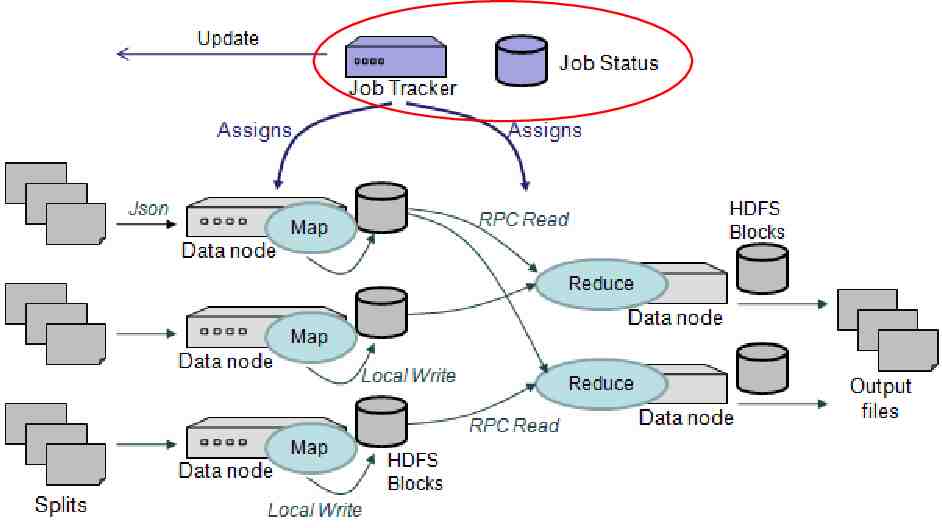

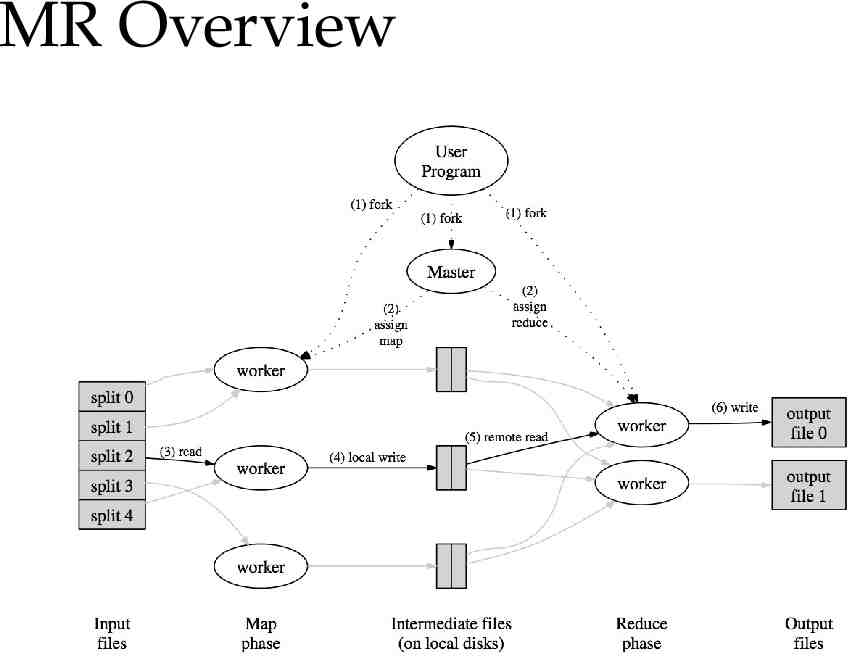

Mapreduce overview: Map, Shuffle/Sort, Reduce.

Distributing NB. Questions: How will you know when each machine is done? (Communication overhead.) How will you know if a machine is dead?

Failure. How big of a deal is it really? A huge deal. In a distributed environment disks fail ALL THE TIME. Large-scale systems must assume that any process can fail at any time. It may be much cheaper to make the software run reliably on unreliable hardware than to make the hardware reliable.

Ken Arnold (Sun, CORBA designer): “Failure is the defining difference between distributed and local programming, so you have to design distributed systems with the expectation of failure. Imagine asking people, ‘If the probability of something happening is one in 10^13, how often would it happen?’ Common sense would be to answer, ‘Never.’ That is an infinitely large number in human terms. But if you ask a physicist, she would say, ‘All the time. In a cubic foot of air, those things happen all the time.’”

Well, that’s a pain What will you do when a task fails?

Well, that’s a pain. What’s the difference between slow and dead? Who cares? Start a backup process. If the process is slow because of machine issues, the backup may finish first. If it’s slow because you poorly partitioned your data, waiting is your punishment.

What else is a pain? Losing your work! If a disk fails you can lose some intermediate output, and ignoring the missing data could give you wrong answers. Who cares? If I’m going to run backup processes, I might as well have backup copies of the intermediate data also.
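
The "start a backup process" idea can be illustrated with two threads racing on the same shard. This is a toy sketch, not how Hadoop schedules backup tasks: `count_words` and `run_with_backup` are hypothetical names, and the `delay` argument merely stands in for a slow or healthy machine.

```python
import time
from concurrent.futures import FIRST_COMPLETED, ThreadPoolExecutor, wait


def count_words(shard, delay):
    """Hypothetical task; `delay` simulates how slow the machine is."""
    time.sleep(delay)
    return sum(len(line.split()) for line in shard)


def run_with_backup(shard, primary_delay, backup_delay):
    """Run the same task twice and keep whichever copy finishes first."""
    pool = ThreadPoolExecutor(max_workers=2)
    attempts = [
        pool.submit(count_words, shard, primary_delay),
        pool.submit(count_words, shard, backup_delay),
    ]
    done, _ = wait(attempts, return_when=FIRST_COMPLETED)
    # Do not block on the straggler; its result is simply discarded.
    pool.shutdown(wait=False)
    return next(iter(done)).result()


total = run_with_backup(["a rose is a rose", "is a rose"], 0.5, 0.01)
print(total)
```

This only works because the task is deterministic and has no side effects, so either copy's answer is acceptable. If both copies are slow because the shard itself is too large, the backup does not help, which is the "waiting is your punishment" case.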

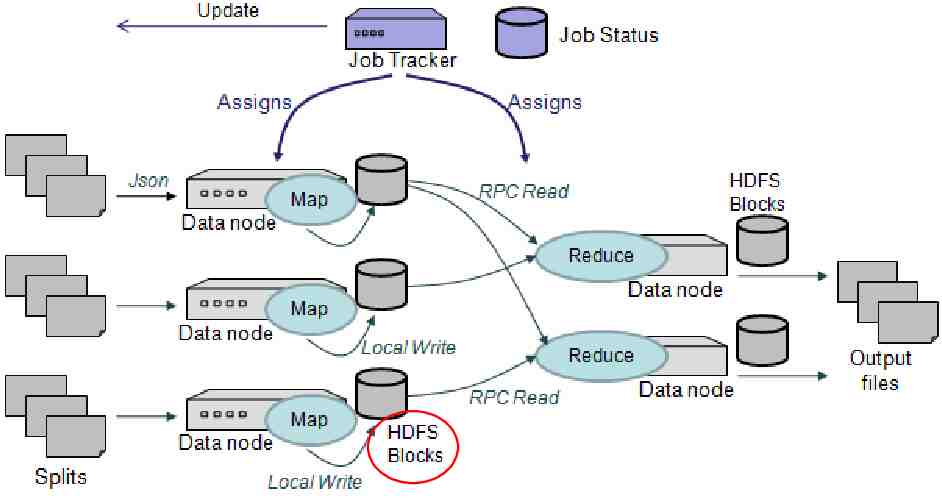

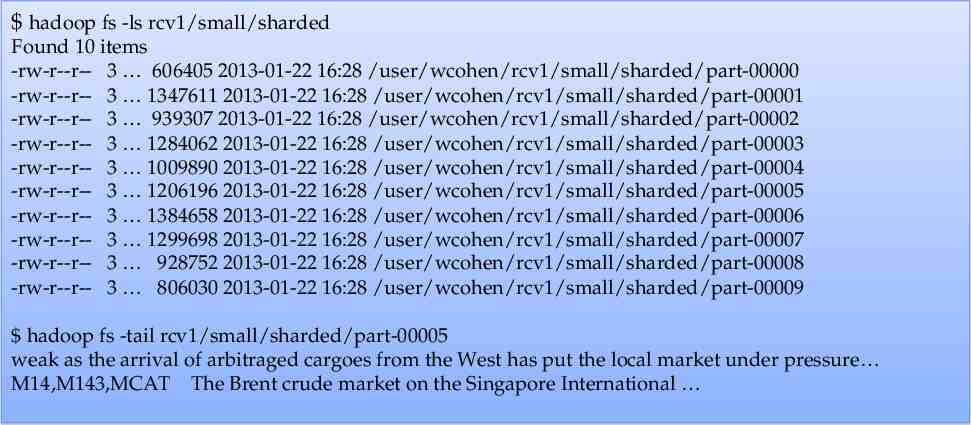

HDFS: The Hadoop File System
- Distributes data across the cluster: a distributed file looks like a directory with shards as files inside it, and HDFS makes an effort to run processes locally with the data.
- Replicates data: by default, 3 copies of each file.
- Optimized for streaming: really, really big “blocks”.

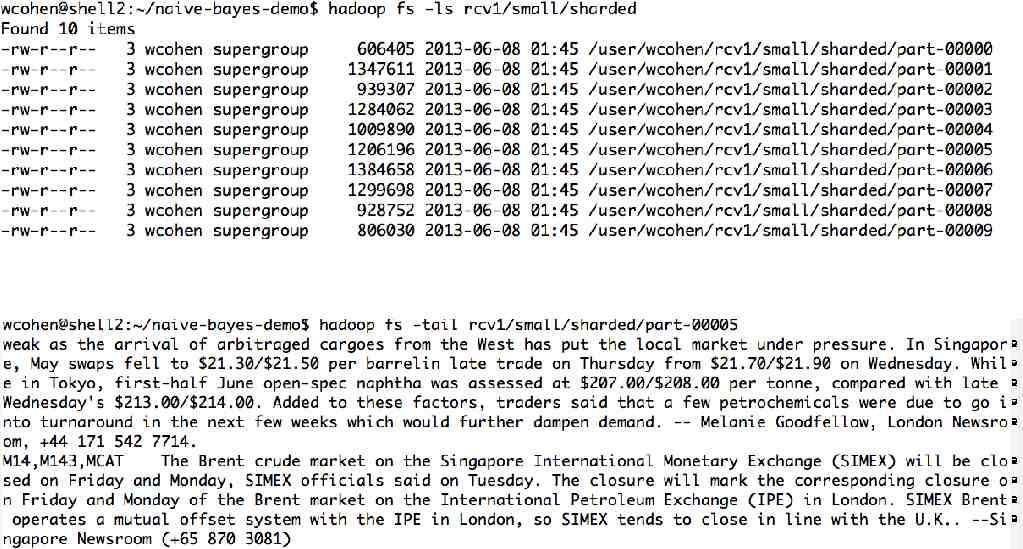

    hadoop fs -ls rcv1/small/sharded
    Found 10 items
    -rw-r--r-- 3 606405 2013-01-22 16:28 /user/wcohen/rcv1/small/sharded/part-00000
    -rw-r--r-- 3 1347611 2013-01-22 16:28 /user/wcohen/rcv1/small/sharded/part-00001
    -rw-r--r-- 3 939307 2013-01-22 16:28 /user/wcohen/rcv1/small/sharded/part-00002
    -rw-r--r-- 3 1284062 2013-01-22 16:28 /user/wcohen/rcv1/small/sharded/part-00003
    -rw-r--r-- 3 1009890 2013-01-22 16:28 /user/wcohen/rcv1/small/sharded/part-00004
    -rw-r--r-- 3 1206196 2013-01-22 16:28 /user/wcohen/rcv1/small/sharded/part-00005
    -rw-r--r-- 3 1384658 2013-01-22 16:28 /user/wcohen/rcv1/small/sharded/part-00006
    -rw-r--r-- 3 1299698 2013-01-22 16:28 /user/wcohen/rcv1/small/sharded/part-00007
    -rw-r--r-- 3 928752 2013-01-22 16:28 /user/wcohen/rcv1/small/sharded/part-00008
    -rw-r--r-- 3 806030 2013-01-22 16:28 /user/wcohen/rcv1/small/sharded/part-00009

    hadoop fs -tail rcv1/small/sharded/part-00005
    weak as the arrival of arbitraged cargoes from the West has put the local market under pressure
    M14,M143,MCAT The Brent crude market on the Singapore International

MR Overview


Map reduce with Hadoop streaming

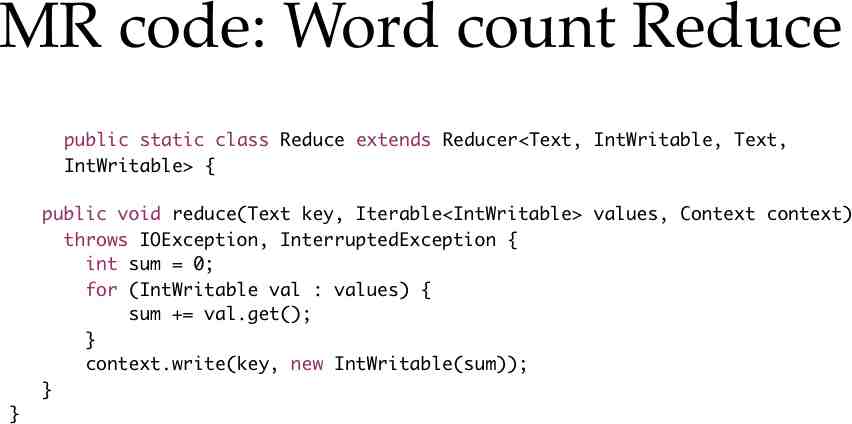

Breaking this down. What actually is a key-value pair? How do you interface with Hadoop? One very simple way: Hadoop’s streaming interface.
- The mapper outputs key-value pairs as one pair per line, with key and value tab-separated.
- The reducer reads in data in the same format.
- Lines are sorted, so lines with the same key are adjacent.
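
The line format described above can be exercised locally with a pair of generator functions. This is a sketch of a word-count job, not the assignment code: `mapper` and `reducer` are illustrative names, and in an actual streaming job each would be wrapped in a script that reads `sys.stdin` and prints to standard output, with Hadoop doing the sort in between.

```python
from itertools import groupby


def mapper(lines):
    """Emit one 'key<TAB>value' line per word, with value 1."""
    for line in lines:
        for word in line.split():
            yield f"{word}\t1"


def reducer(sorted_lines):
    """Sum the values of adjacent lines that share a key."""
    parsed = (line.rstrip("\n").split("\t", 1) for line in sorted_lines)
    for key, run in groupby(parsed, key=lambda kv: kv[0]):
        yield f"{key}\t{sum(int(value) for _, value in run)}"


# Local equivalent of: cat input.txt | MAP | sort | REDUCE
lines = ["a rose is a rose"]
output = list(reducer(sorted(mapper(lines))))
print(output)
```

The reducer never holds more than one run of equal keys at a time; it relies entirely on the sort having placed identical keys next to each other.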

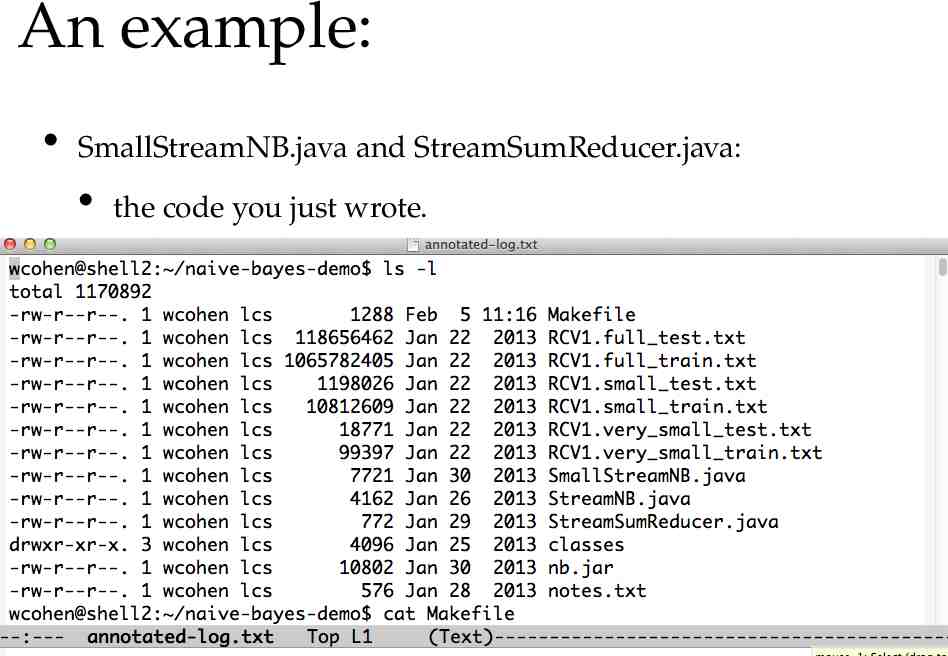

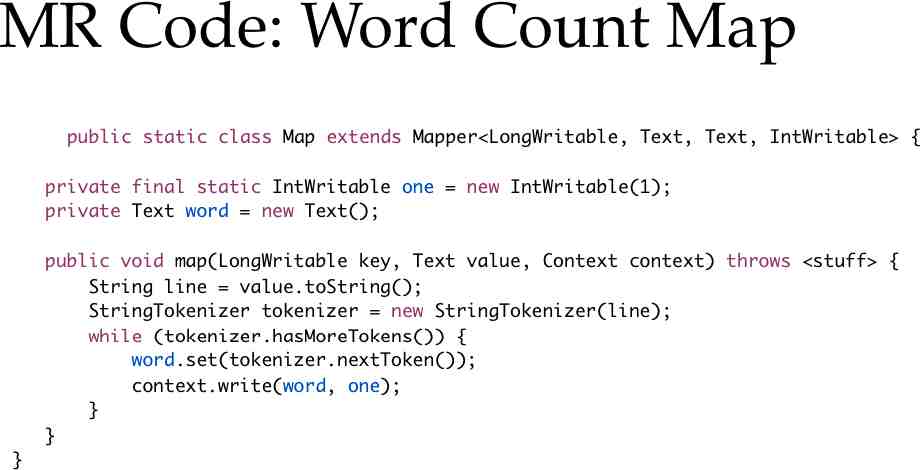

An example: SmallStreamNB.java and StreamSumReducer.java: the code you just wrote.

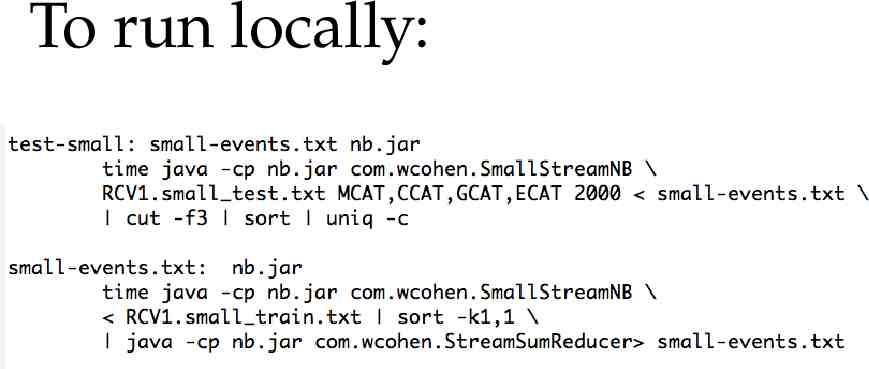

To run locally:

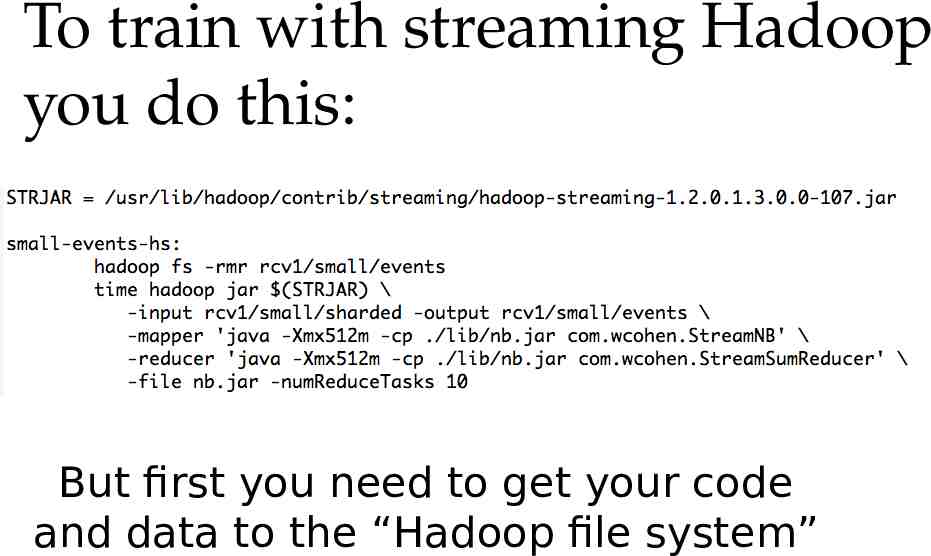

To train with streaming Hadoop you do this: But first you need to get your code and data to the “Hadoop file system”

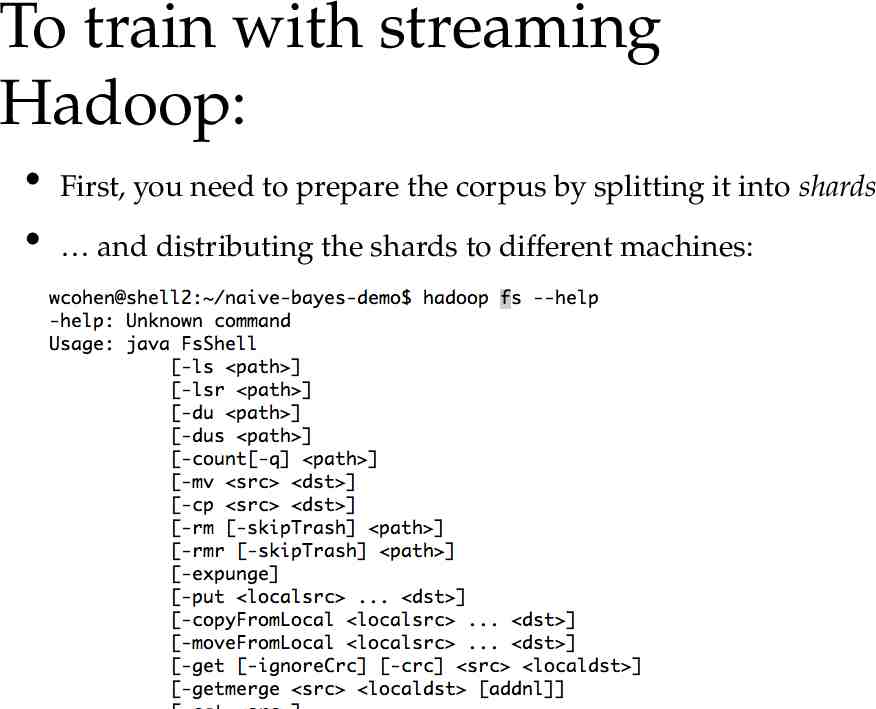

To train with streaming Hadoop: First, you need to prepare the corpus by splitting it into shards and distributing the shards to different machines:

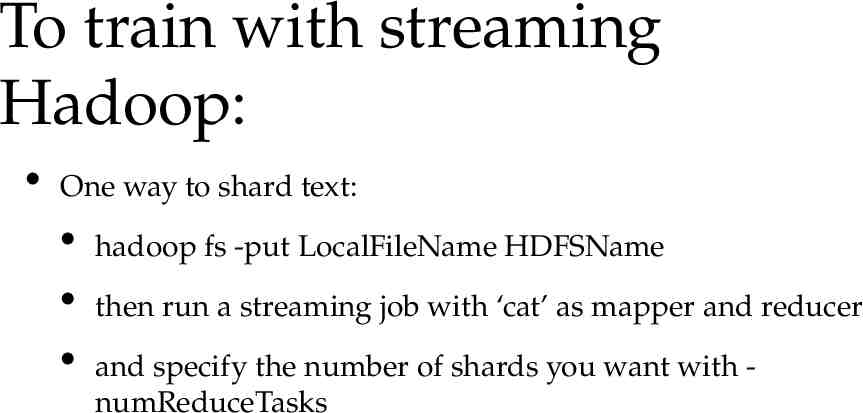

To train with streaming Hadoop: One way to shard text: run hadoop fs -put LocalFileName HDFSName, then run a streaming job with ‘cat’ as both mapper and reducer, and specify the number of shards you want with numReduceTasks.

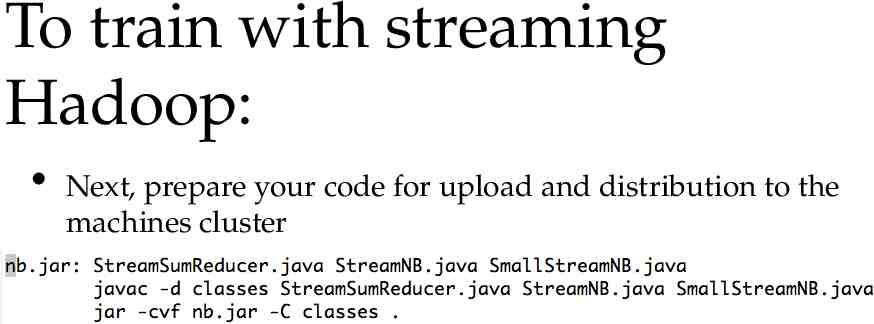

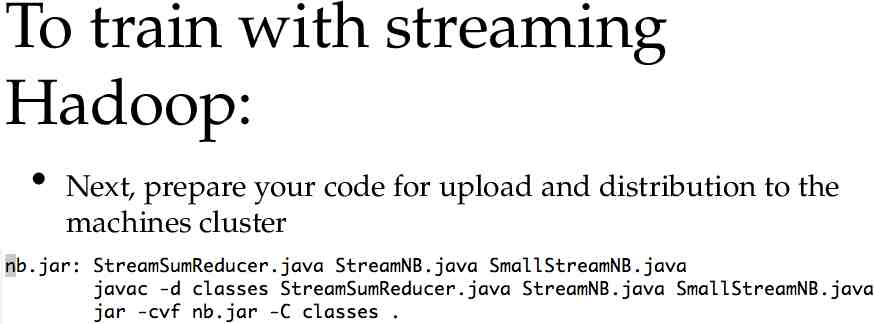

To train with streaming Hadoop: Next, prepare your code for upload and distribution to the machines in the cluster.

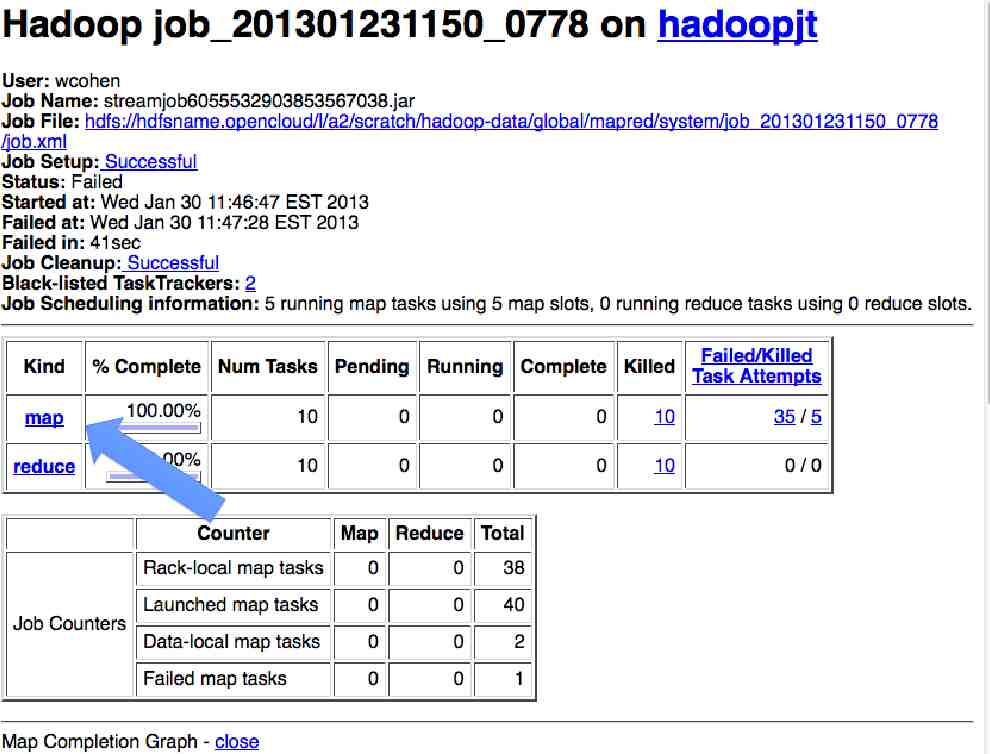

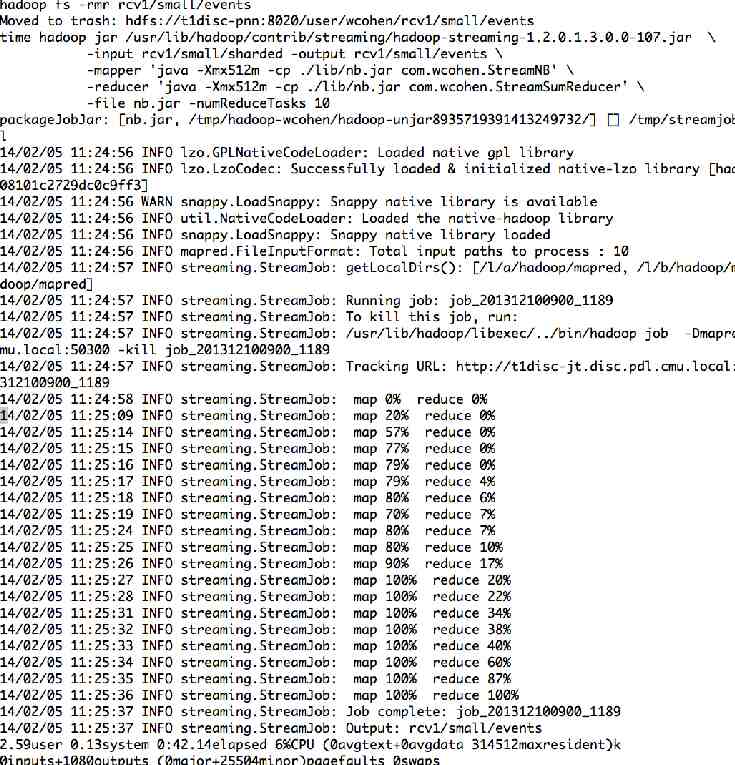

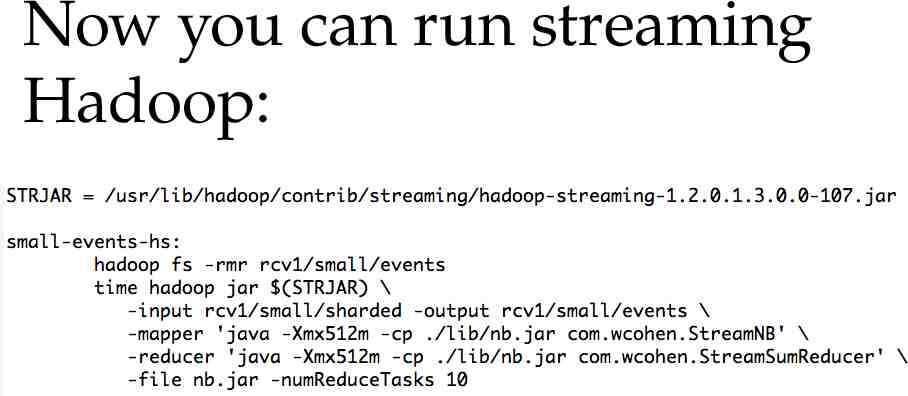

Now you can run streaming Hadoop:

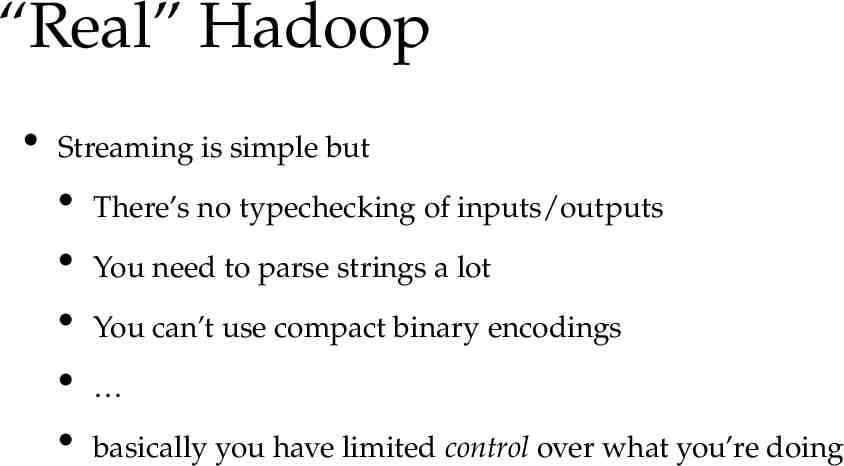

“Real” Hadoop. Streaming is simple, but:
- There’s no typechecking of inputs/outputs.
- You need to parse strings a lot.
- You can’t use compact binary encodings.
- Basically, you have limited control over what you’re doing.

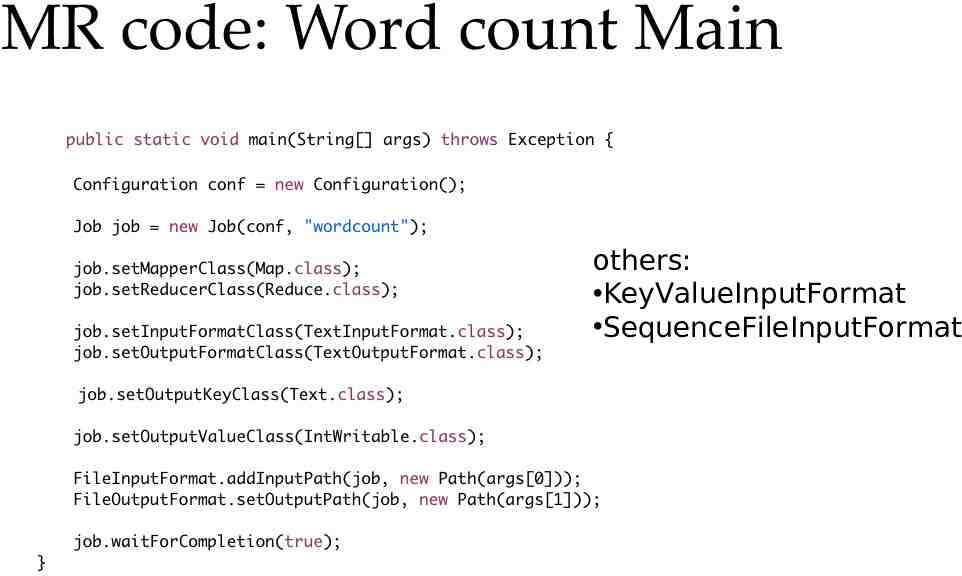

Other input formats: KeyValueTextInputFormat, SequenceFileInputFormat.

Is any part of this wasteful? Remember: moving data around and writing to/reading from disk are very expensive operations. No reducer can start until all mappers are done and the data in its partition has been sorted.

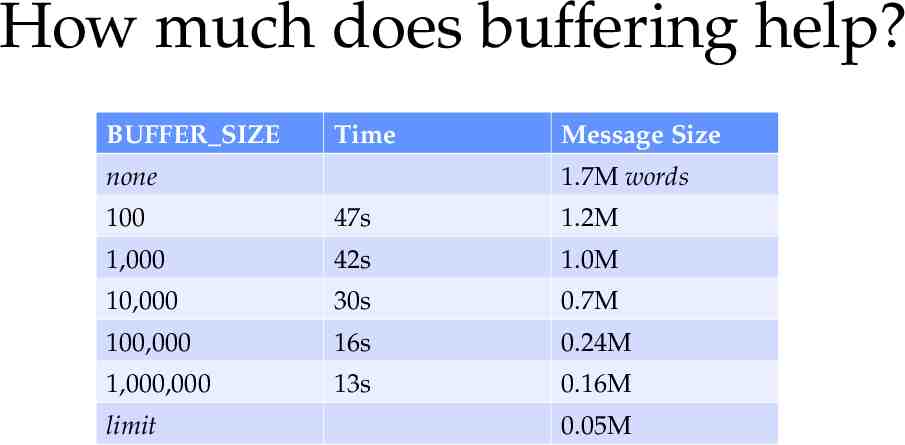

How much does buffering help?

| Buffer size | Time | Message size |
|---|---|---|
| none | | 1.7M words |
| 100 | 47s | 1.2M |
| 1,000 | 42s | 1.0M |
| 10,000 | 30s | 0.7M |
| 100,000 | 16s | 0.24M |
| 1,000,000 | 13s | 0.16M |
| limit | | 0.05M |

Combiners
- A combiner sits between the map and the shuffle.
- It does some of the reducing while you’re waiting for other stuff to happen.
- It avoids moving all of that data over the network.
- It is only applicable when the order of reduce values doesn’t matter and the effect is cumulative.
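
A small word-count sketch shows why a combiner cuts network traffic. The names `mapper` and `combine` are illustrative, and the "messages" here are just list entries on one machine; the point is only the difference in how many pairs would have to be shuffled.

```python
from collections import Counter


def mapper(line):
    """Emit (word, 1) for every word on a line."""
    for word in line.split():
        yield word, 1


def combine(pairs):
    """Sum values per key locally, before anything crosses the network."""
    totals = Counter()
    for key, value in pairs:
        totals[key] += value
    return sorted(totals.items())


shard = ["a rose is a rose", "is a rose"]
raw = [kv for line in shard for kv in mapper(line)]
combined = combine(raw)

print(len(raw), "pairs to shuffle without a combiner")
print(len(combined), "pairs to shuffle with a combiner")
```

This is safe for counting because addition is associative and commutative, so summing partial sums gives the same total. An operation such as averaging would need a different combiner (for example, one that carries a sum and a count) rather than reusing the reducer directly.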

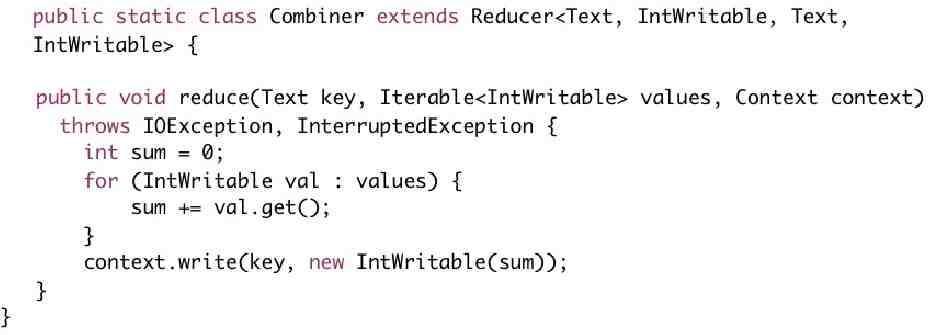

Deja vu: Combiner and Reducer. Often the combiner is the reducer, as for word count, but not always.

Some common pitfalls
- You have no control over the order in which reduces are performed.
- You have “no” control over the order in which you encounter reduce values (more on this later).
- The only ordering you should assume is that Reducers always start after Mappers.

Some common pitfalls
- You should assume your Maps and Reduces will be taking place on different machines with different memory spaces.
- Don’t make a static variable and assume that other processes can read it. They can’t. It appears that they can when run locally, but they can’t.
- No really, don’t do this.

Some common pitfalls
- Do not communicate between mappers or between reducers.
- The overhead is high.
- You don’t know which mappers/reducers are actually running at any given point.
- There’s no easy way to find out what machine they’re running on, because you shouldn’t be looking for them anyway.

When mapreduce doesn’t fit. The beauty of mapreduce is its separability and independence. If you find yourself trying to communicate between processes, you’re doing it wrong, or what you’re doing is not a mapreduce.

When mapreduce doesn’t fit. Not everything is a mapreduce. Sometimes you need more communication. We’ll talk about other programming paradigms later.

What’s so tricky about MapReduce? Really, nothing. It’s easy. What’s often tricky is figuring out how to write an algorithm as a series of map-reduce substeps. How and when do you parallelize? When should you even try to do this? When should you use a different model?

Thinking in Mapreduce. A new task: word co-occurrence statistics (simplified). Input: sentences. Output: $P(\text{Word } B \text{ is in sentence} \mid \text{Word } A \text{ started the sentence})$.

Thinking in mapreduce. We need to calculate

$$P(B \text{ in sentence} \mid A \text{ started sentence}) = \frac{P(B \text{ in sentence} \,\&\, A \text{ started sentence})}{P(A \text{ started sentence})} = \frac{\mathrm{count}(A,B)}{\mathrm{count}(A,*)}$$

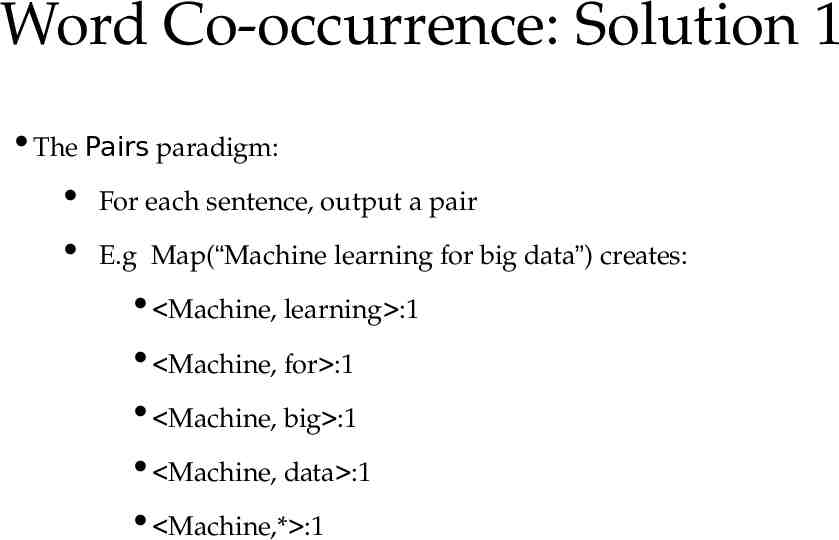

Word Co-occurrence: Solution 1. The Pairs paradigm: for each sentence, output a pair for the first word and each other word, plus one pair for the first word and *. E.g., Map(“Machine learning for big data”) creates:
- (Machine, learning): 1
- (Machine, for): 1
- (Machine, big): 1
- (Machine, data): 1
- (Machine, *): 1

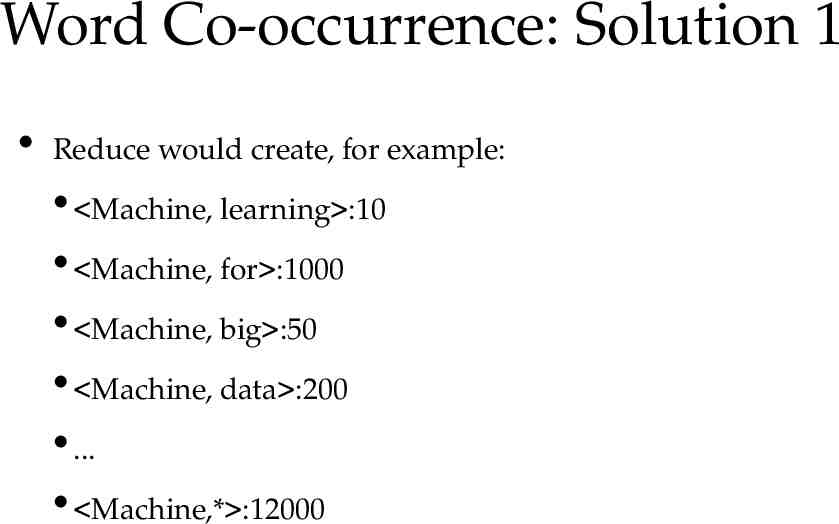

Word Co-occurrence: Solution 1. Reduce would create, for example:
- (Machine, learning): 10
- (Machine, for): 1000
- (Machine, big): 50
- (Machine, data): 200
- ...
- (Machine, *): 12000

Word Co-occurrence: Solution 1. Recall that

$$P(B \text{ in sentence} \mid A \text{ started sentence}) = \frac{P(B \text{ in sentence} \,\&\, A \text{ started sentence})}{P(A \text{ started sentence})} = \frac{\mathrm{count}(A,B)}{\mathrm{count}(A,*)}$$

Do we have what we need? Yes!

Word Co-occurrence: Solution 1. But wait! There’s a problem... can you see it?

Word Co-occurrence: Solution 1. Each reducer will process all counts for a (word1, word2) pair. The information is in different reducers! We need to know (word1, *) at the same time as (word1, word2).

Word Co-occurrence: Solution 1. Solution 1 a): make the first word the reduce key. Each reducer has key: word i, and values: (word i, word j) ... (word i, word b) ... (word i, *) ...

Word Co-occurrence: Solution 1. Now we have all the information in the same reducer. But now we have a new problem; can you see it? Hint: remember, we have no control over the order of values.

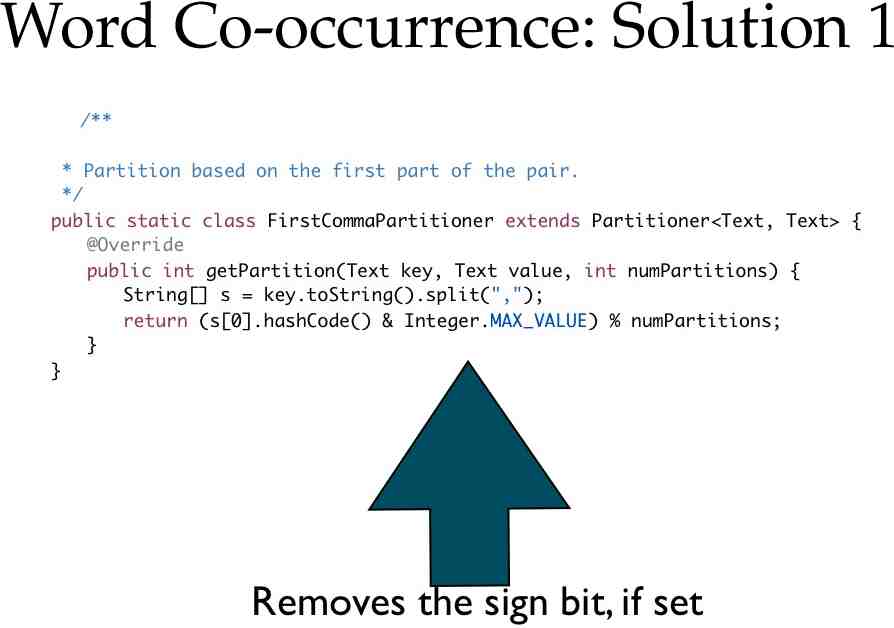

Word Co-occurrence: Solution 1. There could be too many values to hold in memory, so we need (word i, *) to be the first value we encounter. Solution 1 b): keep (word i, word j) as the reduce key, and change the way Hadoop does its partitioning.

Word Co-occurrence: Solution 1. OK, cool, but we still have the same problem: the information is all in the same reducer, but we don’t know the order. But now we have all the information we need in the reduce key!

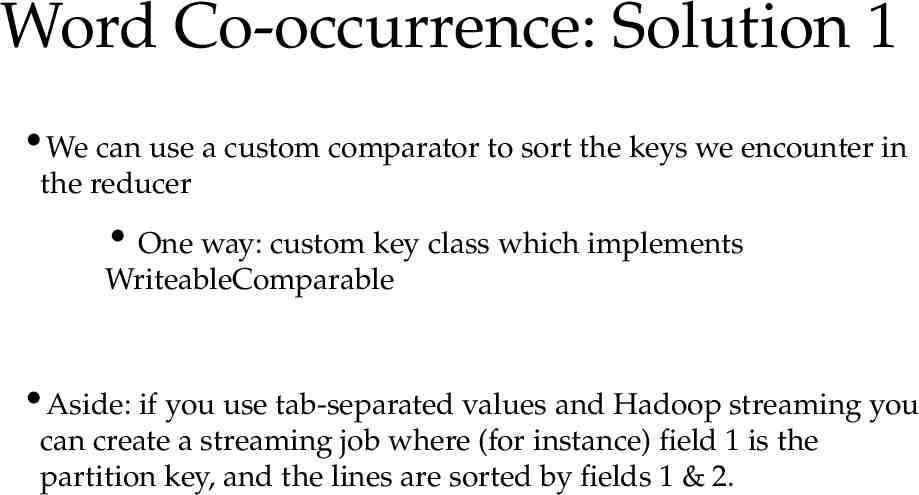

Word Co-occurrence: Solution 1. We can use a custom comparator to sort the keys we encounter in the reducer. One way: a custom key class which implements WritableComparable. Aside: if you use tab-separated values and Hadoop streaming, you can create a streaming job where (for instance) field 1 is the partition key, and the lines are sorted by fields 1 and 2.

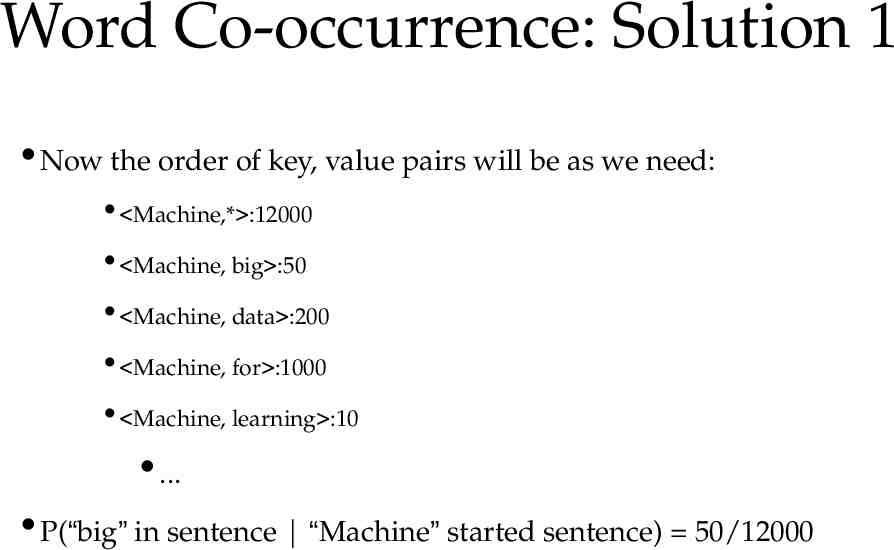

Word Co-occurrence: Solution 1. Now the order of key, value pairs will be as we need:
- (Machine, *): 12000
- (Machine, big): 50
- (Machine, data): 200
- (Machine, for): 1000
- (Machine, learning): 10
- ...

So $P(\text{“big” in sentence} \mid \text{“Machine” started sentence}) = 50/12000$.
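
The pairs approach with the `*` marginal sorted first can be sketched end to end in a few lines. This is a local simulation with assumed names (`map_pairs`, `sort_key`, `reduce_pairs`): a plain `sorted` call stands in for Hadoop's partitioner and comparator, sentences are assumed to be non-empty, and repeated words within a sentence are de-duplicated (my choice, so that the ratio stays a probability).

```python
from itertools import groupby


def map_pairs(sentence):
    """Emit ((first, other), 1) pairs plus the ((first, '*'), 1) marginal."""
    first, *rest = sentence.split()
    yield (first, "*"), 1
    for word in set(rest):  # de-duplicate: "B is in sentence" is yes/no
        yield (first, word), 1


def sort_key(pair):
    """Order by first word, with the '*' marginal ahead of all other words."""
    (first, second), _ = pair
    return (first, second != "*", second)


def reduce_pairs(sorted_pairs):
    """Stream over the sorted pairs, remembering only the current marginal."""
    marginal = 0
    for key, run in groupby(sorted_pairs, key=lambda kv: kv[0]):
        total = sum(count for _, count in run)
        if key[1] == "*":
            marginal = total  # count(A,*) arrives before any count(A,B)
        else:
            yield key, total / marginal


sentences = ["Machine learning for big data", "Machine parts are for machines"]
pairs = [kv for s in sentences for kv in map_pairs(s)]
probs = dict(reduce_pairs(sorted(pairs, key=sort_key)))
print(probs[("Machine", "for")], probs[("Machine", "big")])
```

The reducer keeps a single number in memory per first word, which is the point of forcing `(A, *)` to arrive first. In a distributed job the partitioning would have to depend on the first word only, so that `(A, *)` and every `(A, B)` reach the same reducer.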

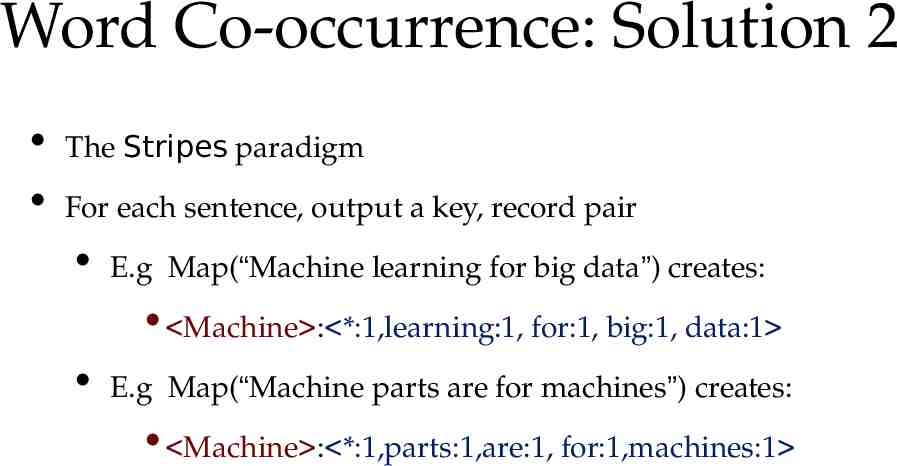

Word Co-occurrence: Solution 2. The Stripes paradigm: for each sentence, output a key, record pair.
- E.g., Map(“Machine learning for big data”) creates: Machine: *:1, learning:1, for:1, big:1, data:1
- E.g., Map(“Machine parts are for machines”) creates: Machine: *:1, parts:1, are:1, for:1, machines:1

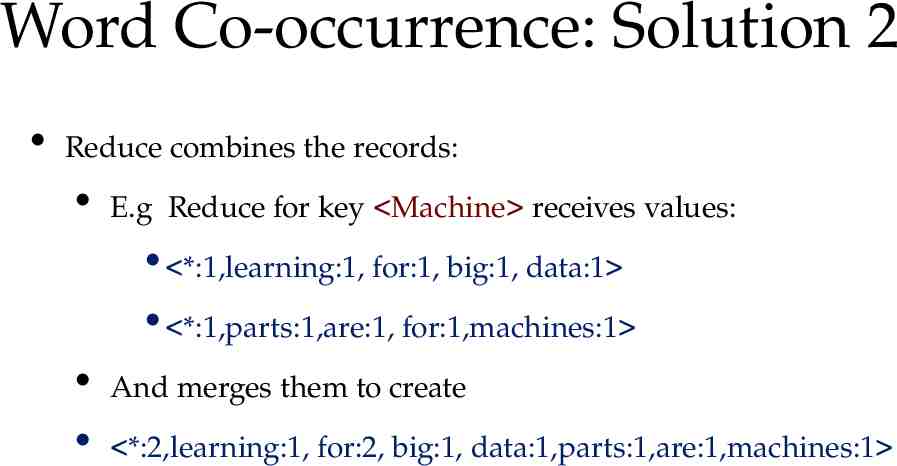

Word Co-occurrence: Solution 2. Reduce combines the records. E.g., Reduce for key Machine receives the values
- *:1, learning:1, for:1, big:1, data:1
- *:1, parts:1, are:1, for:1, machines:1

and merges them to create *:2, learning:1, for:2, big:1, data:1, parts:1, are:1, machines:1.

Word Co-occurrence: Solution 2. This is nice because we have the * count already created; we just have to ensure it always occurs first in the record.

Word Co-occurrence: Solution 2. There is a really big (ha ha) problem with this solution. Can you see it? The value may become too large to fit in memory.

Performance. IMPORTANT: You may not have room for all reduce values in memory. In fact, you should PLAN not to have memory for all values. Remember, small machines are much cheaper, and you have a limited budget.
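
The stripes merge above maps naturally onto Python's `Counter`. This is a local sketch with assumed names (`map_stripe`, `reduce_stripes`); it keeps every merged stripe in a dictionary for convenience, whereas a reducer in a real job would see one key at a time.

```python
from collections import Counter, defaultdict


def map_stripe(sentence):
    """Emit (first word, stripe), where the stripe counts co-occurring words."""
    first, *rest = sentence.split()
    stripe = Counter(set(rest))  # each distinct word counted once per sentence
    stripe["*"] = 1              # marginal: one sentence started with `first`
    return first, stripe


def reduce_stripes(keyed_stripes):
    """Merge all stripes that share a key by adding their counts."""
    merged = defaultdict(Counter)
    for key, stripe in keyed_stripes:
        merged[key].update(stripe)  # Counter.update adds counts
    return merged


sentences = ["Machine learning for big data", "Machine parts are for machines"]
merged = reduce_stripes(map_stripe(s) for s in sentences)
stripe = merged["Machine"]
print(dict(stripe))
print(stripe["for"] / stripe["*"])
```

Each merged stripe is a single in-memory record holding every word that co-occurs with its key, so its size grows with the vocabulary rather than with the number of sentences.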

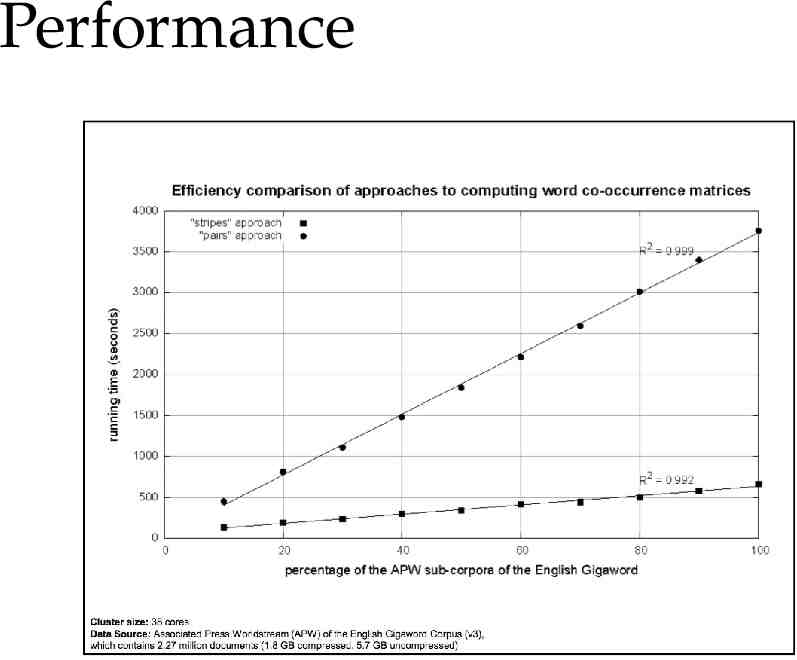

Performance. Which is faster, stripes or pairs? Stripes has a bigger value per key; pairs has more partition/sort overhead.

Conclusions. Mapreduce:
- Can handle big data
- Requires minimal code-writing

Real algorithms are typically a sequence of map-reduce steps.